OpenAI has introduced a new series of language models (GPT-4.1, GPT-4.1 mini, and GPT-4.1 nano), available through its API. The models build on their predecessors, GPT-4o and GPT-4.5, with gains in coding, instruction following, and long-context comprehension, and they support a context window of up to 1 million tokens.

Key Improvements and Benchmarks

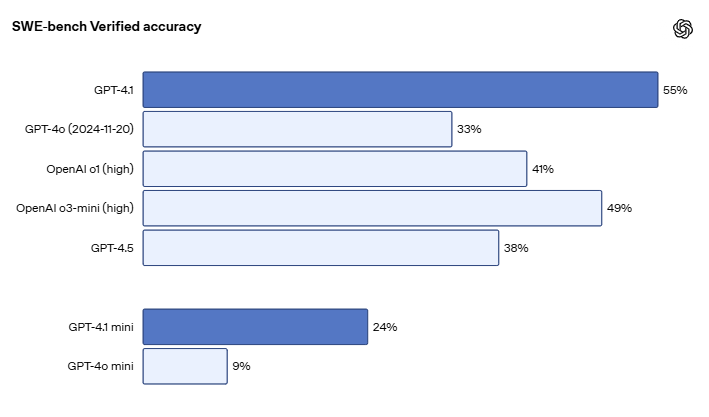

According to OpenAI, GPT-4.1 improves on earlier models in coding, instruction following, and long-context comprehension. On the SWE-bench Verified benchmark, which evaluates real-world software engineering tasks, GPT-4.1 achieved an accuracy of 54.6%. That is a 21.4-point improvement over GPT-4o’s 33.2% and, per OpenAI, a 26.6-point gain over GPT-4.5.
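The gap between the two quoted SWE-bench scores can be checked directly. The short sketch below is only an illustration of the arithmetic: it computes the absolute gain in percentage points and, for contrast, the relative improvement, which is a different quantity. The function names are made up for this example; only the two scores come from the article.

```python
# Scores quoted in the article (percent accuracy on SWE-bench Verified).
GPT_4_1_SCORE = 54.6
GPT_4O_SCORE = 33.2


def point_gain(new: float, old: float) -> float:
    """Absolute difference in percentage points, rounded to one decimal."""
    return round(new - old, 1)


def relative_gain(new: float, old: float) -> float:
    """Improvement relative to the old score, in percent, rounded to one decimal."""
    return round((new - old) / old * 100, 1)


if __name__ == "__main__":
    print(point_gain(GPT_4_1_SCORE, GPT_4O_SCORE))     # gain in percentage points
    print(relative_gain(GPT_4_1_SCORE, GPT_4O_SCORE))  # gain relative to GPT-4o
```

A gain in percentage points and a relative gain can differ substantially when the baseline is low, so it helps to note which one a headline figure refers to.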

GPT-4.1 also scores 10.5 points higher than GPT-4o on Scale’s MultiChallenge instruction-following benchmark. Together, these results suggest the model is better at following complex instructions and producing accurate responses.

Extended Input Handling

OpenAI has also tested how the models handle very long inputs. All models in the GPT-4.1 family accept up to 1 million tokens of context. Internal evaluations such as OpenAI-MRCR and Graphwalks indicate that GPT-4.1 reliably retrieves and reasons over information scattered across long inputs. For instance, GPT-4.1 scored 61.7% on Graphwalks, a benchmark for multi-hop reasoning, compared with GPT-4o’s 42%.

Variants for Diverse Needs

Beyond the primary model, GPT-4.1 mini is aimed at workloads that need lower latency and cost. OpenAI asserts that it matches or surpasses GPT-4o in most intelligence evaluations while reducing cost by 83%. GPT-4.1 nano, the smallest and fastest model in the series, is tailored for simpler tasks such as classification and autocomplete. Despite its size, it scores 80.1% on MMLU and 50.3% on GPQA.

Enhancements in Code Editing

OpenAI has also emphasized improvements in code editing. In Aider’s polyglot benchmark, which assesses the ability to generate diffs instead of full-file rewrites, GPT-4.1 surpasses all previous models, including GPT-4.5. The model also makes fewer unnecessary edits: the rate fell from 9% with GPT-4o to 2% with GPT-4.1.
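To make the difference between a diff and a full-file rewrite concrete, here is a minimal sketch using Python's standard `difflib` module. It is not Aider's edit format or benchmark harness; the file contents and the `shapes.py` path are hypothetical. It only shows that a one-line fix can be expressed as a small patch instead of re-emitting the whole file.

```python
import difflib

# Hypothetical file contents before and after a one-line fix.
ORIGINAL = """def area(width, height):
    return width + height

def perimeter(width, height):
    return 2 * (width + height)
"""

EDITED = """def area(width, height):
    return width * height

def perimeter(width, height):
    return 2 * (width + height)
"""


def make_diff(before: str, after: str, path: str = "shapes.py") -> str:
    """Return a unified diff describing how to turn `before` into `after`."""
    lines = difflib.unified_diff(
        before.splitlines(keepends=True),
        after.splitlines(keepends=True),
        fromfile=f"a/{path}",
        tofile=f"b/{path}",
    )
    return "".join(lines)


if __name__ == "__main__":
    # Only the changed line appears with -/+ markers; the rest is context.
    print(make_diff(ORIGINAL, EDITED))
```

In a diff-style edit, the only lines marked as removed or added are the ones that actually change, which is why unnecessary edits are easy to spot and count.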

Transition from GPT-4.5 Preview

OpenAI confirmed that GPT-4.5 Preview will be deprecated on July 14, 2025, citing cost and performance enhancements in GPT-4.1 as the rationale for the transition. This decision aligns with community speculation about the temporary nature of GPT-4.5. A Reddit user remarked that GPT-4.5 was merely a preview and not an official version, suggesting that its data collection phase has culminated in the development of GPT-4.1.

Pricing Adjustments and Accessibility

Pricing for GPT-4.1 is approximately 26% lower than GPT-4o for typical queries. Prompt caching discounts have increased to 75%, and long-context usage no longer incurs additional charges beyond standard per-token costs.
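As a rough illustration of how the caching discount affects a bill, the sketch below estimates input cost when part of a prompt is served from cache. The article quotes no per-token prices, so `price_per_million` is a placeholder the caller must supply, and the function name is hypothetical. It also assumes the 75% discount applies only to cached input tokens and that a flat per-token rate applies regardless of prompt length.

```python
def estimate_input_cost(
    total_tokens: int,
    cached_tokens: int,
    price_per_million: float,
    cache_discount: float = 0.75,
) -> float:
    """Estimate input cost when some prompt tokens are served from cache.

    price_per_million is a placeholder; the article gives no actual prices.
    cache_discount defaults to the 75% caching discount quoted in the article.
    """
    if not 0 <= cached_tokens <= total_tokens:
        raise ValueError("cached_tokens must be between 0 and total_tokens")
    uncached_tokens = total_tokens - cached_tokens
    full_rate = price_per_million / 1_000_000          # cost per uncached token
    cached_rate = full_rate * (1 - cache_discount)     # discounted cost per cached token
    return uncached_tokens * full_rate + cached_tokens * cached_rate


if __name__ == "__main__":
    # Hypothetical price of 2.00 per million input tokens, 80% of the prompt cached.
    print(estimate_input_cost(1_000_000, 800_000, price_per_million=2.00))
```

With these assumed inputs, the cached portion costs a quarter of what it would at the full rate, so prompts that reuse a long shared prefix benefit the most.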

The GPT-4.1 family is now available through the OpenAI API, although it has not yet been integrated into ChatGPT, where updates to GPT-4o are still underway.

Note: This article is inspired by content from https://www.infoq.com/news/2025/05/openai-gpt-4-1/. It has been rephrased for originality. Images are credited to the original source.