Introduction: Why Healthcare Is AI’s Toughest Challenge

Artificial intelligence (AI) is transforming industries worldwide, but nowhere does it face a greater test than in healthcare. With complex medical conditions, deeply personal patient interactions, and strict regulatory frameworks, healthcare demands more from AI than perhaps any other field. The question is not just whether AI can help, but how, when, and where it should be integrated into patient care.

The Promise and Reality of AI in Medicine

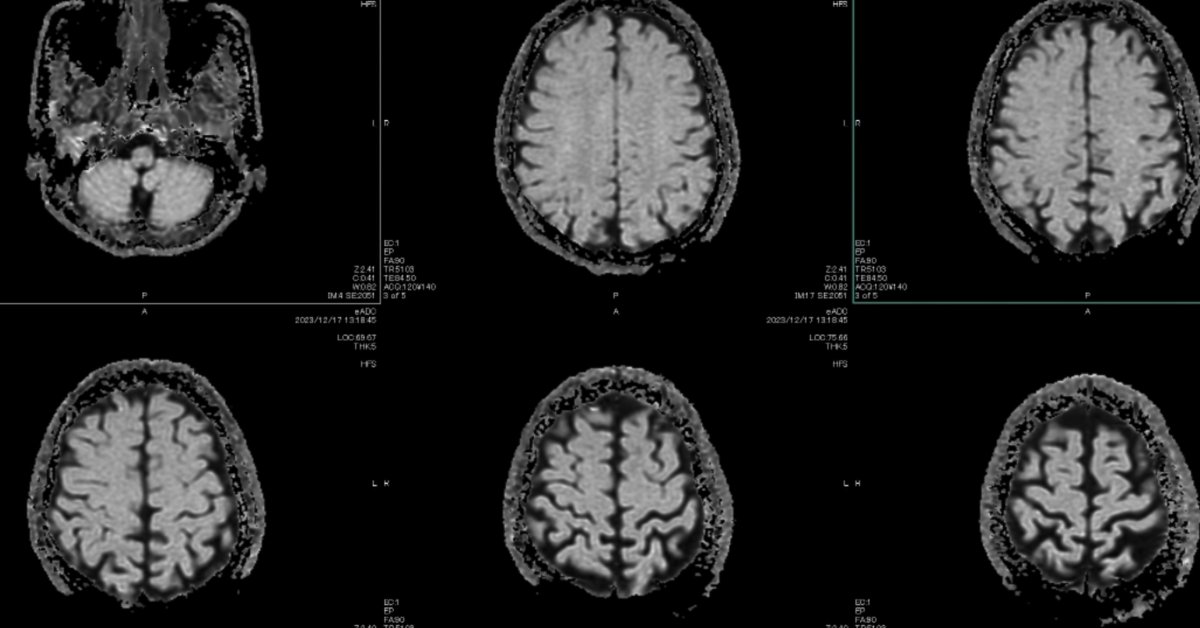

A decade ago, Nobel Prize-winning computer scientist Geoffrey Hinton predicted that AI would quickly surpass humans in reading medical images, even suggesting that training radiologists would soon be unnecessary. Yet nearly ten years on, radiologists remain a vital part of the medical workforce, and their numbers have grown. Of the 950 AI and machine learning tools the FDA has authorized since 1995, 723 are radiology devices. The technology has advanced, but demand for human expertise has not diminished.

When challenged on his prediction, Hinton explained that he had misjudged not the pace of technological progress but the economics of healthcare. As he put it, “Healthcare is a very elastic market. If you allow a healthcare worker to do ten times as much, we’ll just get ten times as much healthcare.” The implication is clear: the appetite for medical care is virtually limitless, so as AI uncovers previously unmet needs, it expands rather than contracts demand for professionals.

AI’s Strengths, Weaknesses, and the Human Factor

In certain clinical scenarios, AI already matches or even outperforms physicians. Cardiologist and researcher Eric Topol cites studies in which AI systems working alone produced better results than human doctors, even when those doctors had access to AI tools. Topol cautions, however, that the best outcomes often come from blending human and machine intelligence. Sometimes physicians fall into the trap of automation neglect, sticking with their initial diagnosis despite the AI’s suggestions, a sign that strategies for collaboration between humans and AI are still evolving.

Not all evidence favors machines acting alone. A recent trial published in Nature Medicine evaluated an AI system on complex cardiology cases. The findings were nuanced: general cardiologists using AI assistance produced assessments that specialists preferred, with fewer significant errors. Yet the AI still made serious mistakes in a small percentage of cases, sometimes providing misleading information. Importantly, when a physician challenged it, the AI could correct itself, underscoring the need for human oversight.

At the same time, serious pitfalls remain. Another study found that recent language models, such as ChatGPT, made critical errors in medical triage, incorrectly advising patients to stay home during emergencies. The evidence is mixed: sometimes AI alone excels, sometimes the combination of human and machine is best, and sometimes the technology is dangerously unreliable. The real challenge, then, is not simply whether AI works, but knowing when and how to use it.

A Shift Toward Preventive Medicine

Perhaps AI’s most profound impact won’t be in diagnosing existing illnesses but in predicting and preventing disease before symptoms emerge. Modern medicine is largely reactive, but AI could help shift healthcare upstream. As Eric Topol notes, “The three major age-related diseases (neurodegeneration, cancer, and cardiovascular disease) all take 15 to 20 years to develop.” That long lead time leaves a wide window for early detection and intervention. Wearable devices now continuously monitor health metrics such as heart rate and sleep patterns, and methods for analyzing blood proteins keep improving; together, these data sources let researchers anticipate health issues far earlier than before.

Despite these advances, gaps remain. Topol highlights the complexity of the immune system and calls for better clinical tools to map immune function, which he sees as key to understanding and predicting major diseases. The future of medicine may rest not on a single AI breakthrough but on integrating AI-driven analysis of sleep data, wearable readings, and biological markers to enable proactive, personalized care.

Legal, Ethical, and Human Considerations

The adoption of AI in healthcare is shaped by more than technology; legal and ethical questions loom large. As Hinton points out, liability is asymmetric: if a doctor doesn’t use AI and a patient suffers, the patient has little recourse. But if AI is used and causes harm, liability can be swift and severe, which discourages early adoption.

Meanwhile, human error remains a major concern. Topol notes that in the U.S. alone, diagnostic errors result in approximately 800,000 cases of disability or death annually, yet public discourse tends to focus on AI’s mistakes rather than those of human clinicians.

Empathy is another unresolved issue. While Hinton believes AI systems can “genuinely have empathy,” Topol disagrees, arguing that machines can simulate empathy but never truly understand it. He emphasizes that the essence of medicine lies in human connection: the knowledge that another person cares.

Conclusion: Charting a Collaborative Path Forward

AI’s role in healthcare is not to replace doctors but to augment and extend their capabilities. The future will demand careful management of AI’s strengths and limitations, thoughtful integration into clinical workflows, and a steadfast commitment to human compassion. As AI continues to evolve, striking the right balance between technology and humanity will be essential in delivering better, safer, and more empathetic care for all.

This article is inspired by content from Original Source. It has been rephrased for originality. Images are credited to the original source.