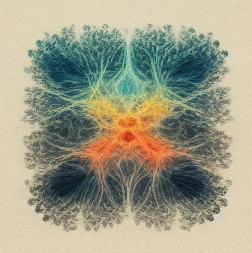

Neural networks have become the cornerstone of many machine learning applications, transforming fields such as image recognition and natural language processing. However, as these networks grow larger and more complex, so does the demand for efficient, compact models. Neural network pruning addresses this challenge by reducing the size and computational cost of a network, ideally without a significant loss in accuracy. In this beginner’s guide, we explore neural network pruning: its benefits, its main techniques, and its potential challenges.

Introduction

Neural network pruning is the systematic removal of unnecessary connections (weights) and other parameters from a neural network. By eliminating redundant or less important parts of the network, we obtain a more compact and efficient model. Pruning has attracted significant attention because it can reduce storage requirements, speed up computation, and in some cases make networks easier to interpret.

Why Prune Neural Networks?

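1. Improved Efficiency: Pruning reduces the number of parameters in a network, which lowers memory consumption and can speed up computation. How much faster the pruned model actually runs depends on whether the hardware and software can exploit the removed weights. Efficiency matters most in scenarios with limited computational resources, such as mobile and embedded devices.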

2. Regularization: Pruning can act as a form of regularization, which may reduce overfitting and improve the network's ability to generalize.

3. Enhanced Interpretability: Smaller networks can be easier to interpret and analyze, helping researchers gain insight into the inner workings of the model.

Different Pruning Techniques

1. Magnitude-based Pruning: This technique treats the connections or parameters with the smallest absolute values (magnitudes) as the least important. Pruning is performed either by setting these low-magnitude weights to zero or by removing them entirely.

2. Structural Pruning: Structural pruning (also called structured pruning) removes entire neurons, channels, layers, or other sections of a neural network. Its main objective is to simplify the architecture while preserving performance as much as possible.

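3. Iterative Pruning: In iterative pruning, the network undergoes multiple pruning cycles, usually with a round of re-training after each one. The pruning intensity is increased gradually, which lets the model adapt to each reduction and often preserves accuracy better than removing the same fraction of weights in a single step.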

4. Adaptive Pruning: Adaptive pruning decides what to prune dynamically during training or inference, applying predefined criteria to the data the network is currently processing. This allows the network to adapt its structure as training progresses or as its inputs change.
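To make the first three techniques concrete, here is a minimal NumPy sketch. It is illustrative only and rests on our own assumptions: the function names `magnitude_prune`, `prune_neurons`, and `iterative_prune` are hypothetical, structural pruning scores each hidden neuron by the L2 norm of its incoming weights, and the iterative variant simply raises the sparsity target linearly, with the re-training step left as a comment. Deep learning frameworks ship their own tools for this (PyTorch's `torch.nn.utils.prune` module, for example), which a real project would normally use instead.

```python
import numpy as np


def magnitude_prune(weights: np.ndarray, sparsity: float) -> tuple[np.ndarray, np.ndarray]:
    """Unstructured magnitude pruning: zero the `sparsity` fraction of weights
    with the smallest absolute value. Returns (pruned_weights, mask)."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    k = int(sparsity * weights.size)  # number of weights to remove
    mask = np.ones(weights.size)
    # argsort with axis=None ranks all entries of the flattened array.
    mask[np.argsort(np.abs(weights), axis=None)[:k]] = 0.0
    mask = mask.reshape(weights.shape)
    return weights * mask, mask


def prune_neurons(w1, b1, w2, keep: int):
    """Structural pruning of the hidden layer of a 2-layer MLP.

    w1: (hidden, in) incoming weights, b1: (hidden,) biases,
    w2: (out, hidden) outgoing weights. Keeps the `keep` neurons whose
    incoming weights have the largest L2 norm (an assumed criterion) and
    returns genuinely smaller matrices.
    """
    if not 1 <= keep <= w1.shape[0]:
        raise ValueError("keep must be between 1 and the number of neurons")
    scores = np.linalg.norm(w1, axis=1)          # one importance score per neuron
    kept = np.sort(np.argsort(scores)[-keep:])   # surviving neuron indices, in order
    return w1[kept], b1[kept], w2[:, kept]


def iterative_prune(weights, final_sparsity: float, cycles: int):
    """Iterative magnitude pruning: raise sparsity linearly over `cycles`."""
    mask = np.ones_like(weights)
    for c in range(1, cycles + 1):
        weights, mask = magnitude_prune(weights, final_sparsity * c / cycles)
        # A real pipeline would re-train (fine-tune) the masked network here.
    return weights, mask


rng = np.random.default_rng(0)
w = rng.normal(size=(4, 5))
pruned, mask = magnitude_prune(w, 0.5)
print(int(mask.sum()))  # 10 of the 20 weights survive

w1, b1, w2 = rng.normal(size=(8, 5)), np.zeros(8), rng.normal(size=(3, 8))
s1, sb1, s2 = prune_neurons(w1, b1, w2, keep=6)
print(s1.shape, sb1.shape, s2.shape)  # (6, 5) (6,) (3, 6)

_, it_mask = iterative_prune(w, 0.75, cycles=3)
print(int(it_mask.sum()))  # 5 of the 20 weights survive
```

Note that `magnitude_prune` keeps the matrix shape and only fills it with zeros, whereas `prune_neurons` returns smaller dense matrices, which is why structural pruning tends to translate more directly into faster inference on ordinary hardware.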

Challenges and Considerations

While neural network pruning offers numerous benefits, there are some challenges and considerations to keep in mind:

1. Trade-off Between Size and Accuracy: Pruning can reduce accuracy if it is not performed carefully, and aggressive pruning usually costs more accuracy than mild pruning. Balancing size reduction against maintained performance is essential.

2. Pruning Criteria: Choosing a pruning criterion that accurately captures the importance of each connection or parameter (for example, its magnitude or its estimated effect on the loss) is crucial for achieving the desired results.

3. Recovery and Re-training: Pruned networks often need to be re-trained (fine-tuned) to regain lost accuracy. The re-training strategy, such as the learning rate and the number of training steps, must be chosen carefully to recover performance effectively.

4. Compatibility with Different Architectures: Different architectures, such as convolutional neural networks (CNNs) and recurrent neural networks (RNNs), may require different pruning strategies because of their distinct designs and functionality.
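The size/accuracy trade-off and the recovery step can be illustrated on a toy problem. The sketch below is a stand-in under stated assumptions, not a real network: a linear-regression model plays the role of the trained network, the helpers `mse`, `magnitude_mask`, and `fine_tune` are hypothetical, and re-training is plain gradient descent in which the pruning mask is applied to every update so that removed weights stay at zero.

```python
import numpy as np

rng = np.random.default_rng(42)

# Hypothetical toy task: linear regression standing in for a trained network.
n, d = 200, 10
X = rng.normal(size=(n, d))
true_w = rng.normal(size=d)
y = X @ true_w + 0.1 * rng.normal(size=n)


def mse(w: np.ndarray) -> float:
    """Mean squared error of weights `w` on the toy data."""
    return float(np.mean((X @ w - y) ** 2))


def magnitude_mask(w: np.ndarray, sparsity: float) -> np.ndarray:
    """Binary mask that zeroes the `sparsity` fraction of smallest-magnitude weights."""
    k = int(sparsity * w.size)
    mask = np.ones_like(w)
    mask[np.argsort(np.abs(w))[:k]] = 0.0
    return mask


def fine_tune(w: np.ndarray, mask: np.ndarray, steps: int = 200) -> np.ndarray:
    """Masked gradient descent: pruned weights get no updates and stay at zero."""
    # Step size 1/L, where L is the largest eigenvalue of the MSE Hessian,
    # guarantees that no step increases the loss on this quadratic problem.
    lr = 1.0 / np.linalg.eigvalsh(2.0 * X.T @ X / n).max()
    w = w * mask
    for _ in range(steps):
        grad = 2.0 * X.T @ (X @ w - y) / n
        w = w - lr * grad * mask
    return w


# "Train" the dense model (closed-form least squares).
dense_w, *_ = np.linalg.lstsq(X, y, rcond=None)

# Trade-off: how the loss changes as more weights are removed (no re-training).
for s in (0.0, 0.3, 0.6, 0.9):
    print(f"sparsity {s:.1f}: MSE {mse(dense_w * magnitude_mask(dense_w, s)):.4f}")

# Recovery: prune half the weights, then fine-tune the survivors.
mask = magnitude_mask(dense_w, 0.5)
pruned_w = dense_w * mask
tuned_w = fine_tune(pruned_w, mask)
print(f"dense {mse(dense_w):.4f} | pruned {mse(pruned_w):.4f} | fine-tuned {mse(tuned_w):.4f}")
```

Keeping the mask fixed during fine-tuning is what separates recovery from simply re-training a dense model: the remaining weights compensate for the removed ones while the parameter count stays the same.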

Conclusion

Neural network pruning is a powerful technique for making neural networks more efficient. By removing unnecessary connections and parameters, pruning produces smaller, faster, and often more interpretable models. However, it is important to weigh the trade-offs and challenges of pruning to strike the right balance between size reduction and accuracy. As the field continues to evolve, researchers keep developing new techniques and strategies that push the boundaries of network optimization.