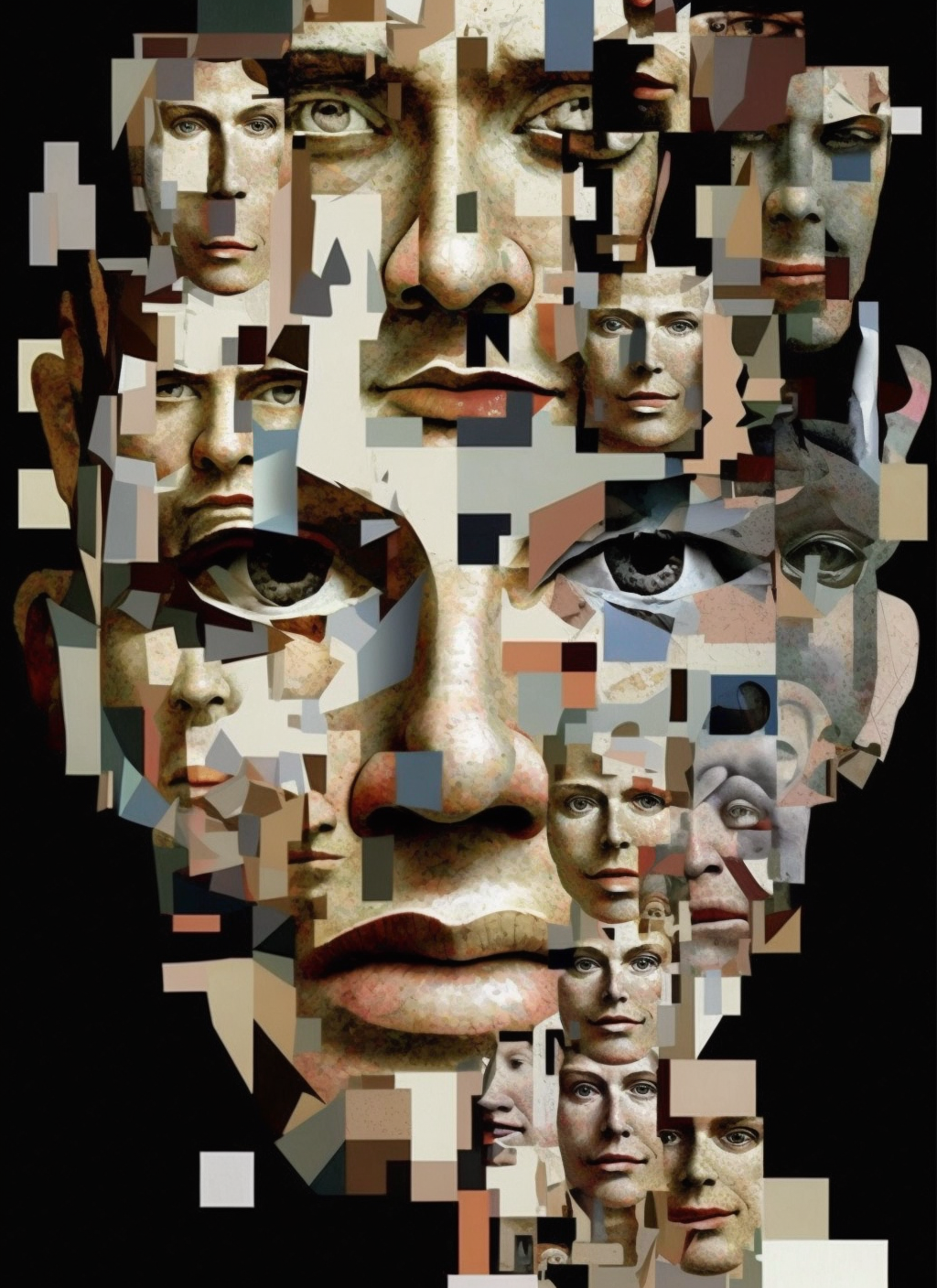

Facial expression analysis is a fascinating field that aims to understand and interpret human emotions through the study of facial movements. By analyzing the subtle changes in facial expressions, researchers and practitioners can gain insights into a person’s emotional state, which has applications in various domains such as psychology, marketing, and human-computer interaction.

In recent years, advancements in computer vision and machine learning have paved the way for automated facial expression analysis. One such tool that has gained popularity among researchers and developers is PyFeat. In this guide, we will explore the basics of facial expression analysis using PyFeat and how it can be used to analyze emotions.

Understanding Facial Expressions

Facial expressions are a fundamental way humans communicate emotions. They combine movements of the eyes, eyebrows, and mouth with head position, and together these cues convey different emotional states. For example, a smile typically indicates happiness, while a furrowed brow may indicate anger or confusion.

The Importance of Facial Expression Analysis

Facial expression analysis offers several advantages in understanding human emotions. It provides insight into non-verbal cues, which can sometimes reveal more about a person’s emotional state than their words alone. Facial expression analysis is also useful in applications such as:

– Emotion recognition: Automatically categorizing facial expressions into basic emotions like happiness, sadness, anger, etc.

– Human-Computer Interaction: Designing user interfaces that can adapt to a user’s emotional state.

– Market research: Analyzing customer reactions to products or advertisements.

– Psychology: Studying emotional states in mental health research.

Introduction to PyFeat

PyFeat is a Python library built specifically for facial expression analysis. It provides a collection of feature extraction algorithms that can be used to capture and represent facial expressions in a machine-readable format. PyFeat offers a range of features, including geometric features, appearance-based features, local binary patterns, and more.

Getting Started with PyFeat

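To begin using PyFeat, you’ll need to install the library and its dependencies. The package is published on PyPI as `py-feat`, so run the following command in your Python environment:

```
pip install py-feat
```

Once PyFeat is installed, you can import it into your Python script or Jupyter notebook. The module itself is named `feat`:

```python
# Detector is the library's main entry point for running its pretrained models
from feat import Detector
```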

Facial Expression Analysis Workflow

To perform facial expression analysis using PyFeat, you can follow a typical workflow consisting of the following steps:

1. Data collection: Gather a dataset of facial images or videos labeled with corresponding emotions.

2. Preprocessing: Prepare the data by detecting and aligning faces, resizing images, and normalizing pixel values.

3. Feature extraction: Extract relevant features from the preprocessed images using PyFeat’s feature extraction algorithms.

4. Model training: Train a machine learning model using the extracted features and the labeled data.

5. Model evaluation: Evaluate the trained model’s performance on a separate test dataset.

6. Deployment: Deploy the trained model to perform emotion recognition on new, unseen data.

Each of these steps requires careful consideration and domain knowledge. PyFeat provides the necessary tools and algorithms to simplify the feature extraction part of the workflow.
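To make the preprocessing step more concrete, here is a minimal NumPy sketch of cropping, resizing, and normalizing a face region. It is not PyFeat's own preprocessing: the function name `preprocess_face`, the `(top, left, height, width)` box format, and the 48-pixel output size are assumptions chosen for illustration, and the face box is assumed to come from a separate face detector and to lie fully inside the image.

```python
import numpy as np

def preprocess_face(image, box, size=48):
    """Crop a face box, resize it by nearest-neighbour sampling, scale to [0, 1]."""
    top, left, height, width = box  # hypothetical box format, assumed inside the image
    face = image[top:top + height, left:left + width].astype(np.float64)
    # Nearest-neighbour resize: pick one source row/column per output pixel.
    rows = np.arange(size) * height // size
    cols = np.arange(size) * width // size
    resized = face[np.ix_(rows, cols)]
    # Normalize 8-bit intensities to the range [0, 1].
    return resized / 255.0

# Synthetic 8-bit grayscale frame standing in for a real photo.
rng = np.random.default_rng(0)
frame = rng.integers(0, 256, size=(120, 160), dtype=np.uint8)
face = preprocess_face(frame, box=(10, 20, 96, 96))
print(face.shape)  # (48, 48)
```

Nearest-neighbour sampling keeps the sketch dependency-free. In practice an image library with proper interpolation would usually give smoother results.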

Feature Extraction with PyFeat

Feature extraction is a crucial step in facial expression analysis as it involves capturing the most relevant information from the facial images. PyFeat offers a wide range of feature extraction algorithms, allowing you to choose the most suitable ones for your task.

Some of the commonly used feature extraction algorithms in PyFeat include:

– Geometric features such as facial landmarks and head pose estimation.

– Appearance-based features such as local binary patterns (LBP) and histogram of oriented gradients (HOG).

– Statistical features such as mean, standard deviation, and skewness of pixel intensities.

You can select and combine these features based on your specific requirements and domain knowledge. PyFeat provides an easy-to-use API to extract these features from facial images or videos.
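To illustrate what combining features can look like, the sketch below computes two of the feature types listed above by hand with NumPy: intensity statistics and a basic 3x3 local binary pattern histogram. This is not PyFeat's API. The function names `statistical_features` and `lbp_histogram` are hypothetical, and the input is assumed to be a grayscale face crop of at least 3x3 pixels.

```python
import numpy as np

def statistical_features(gray):
    """Mean, standard deviation, and skewness of pixel intensities."""
    x = gray.astype(np.float64).ravel()
    mean = x.mean()
    std = x.std()
    # Skewness is the third standardized moment; use 0 for a flat image.
    skew = 0.0 if std == 0 else np.mean(((x - mean) / std) ** 3)
    return np.array([mean, std, skew])

def lbp_histogram(gray):
    """Normalized 256-bin histogram of basic 3x3 local binary patterns."""
    g = gray.astype(np.int32)
    h, w = g.shape
    center = g[1:-1, 1:-1]
    codes = np.zeros_like(center)
    # Eight neighbours, clockwise from top-left; each contributes one bit.
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]
    for bit, (dy, dx) in enumerate(offsets):
        neighbour = g[1 + dy:h - 1 + dy, 1 + dx:w - 1 + dx]
        codes |= (neighbour >= center).astype(np.int32) << bit
    hist = np.bincount(codes.ravel(), minlength=256).astype(np.float64)
    return hist / hist.sum()

# Synthetic grayscale crop standing in for an aligned face image.
rng = np.random.default_rng(0)
face = rng.integers(0, 256, size=(48, 48), dtype=np.uint8)

# Combine both feature types into one vector: 3 statistics + 256 histogram bins.
features = np.concatenate([statistical_features(face), lbp_histogram(face)])
print(features.shape)  # (259,)
```

Concatenating the vectors is the simplest way to combine feature types. Because the statistics and the histogram live on different scales, it is usually worth standardizing the combined vector before training.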

Training a Model with PyFeat

Once the features are extracted using PyFeat, you can proceed to train a machine learning model. The choice of model depends on the specific task and dataset characteristics. Common models used for facial expression analysis include support vector machines (SVM), convolutional neural networks (CNN), and random forests.

The extracted features can be arranged as a numeric array with one row per face, which is the input format expected by popular machine learning libraries such as scikit-learn and TensorFlow, so their usual training and evaluation tools can be applied to the features directly.
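As a rough illustration of the training step, the sketch below fits a support vector machine with scikit-learn. The data is synthetic: two well-separated clusters of random numbers stand in for extracted feature vectors, and the two class labels are hypothetical. With real data, `X` would hold one feature vector per face and `y` the matching emotion labels.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for extracted features: 200 samples, 10 features, 2 classes.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 1.0, (100, 10)), rng.normal(3.0, 1.0, (100, 10))])
y = np.array([0] * 100 + [1] * 100)  # hypothetical labels, e.g. 0 = neutral, 1 = happy

# Hold out a quarter of the samples, keeping class proportions equal in both parts.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# Standardize the features, then fit an SVM with an RBF kernel.
model = make_pipeline(StandardScaler(), SVC(kernel="rbf", C=1.0))
model.fit(X_train, y_train)

accuracy = model.score(X_test, y_test)
print(f"held-out accuracy: {accuracy:.2f}")
```

Putting the scaler and the classifier in one pipeline ensures the scaling statistics are learned from the training split only, which avoids leaking information from the test data.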

Evaluating and Fine-Tuning the Model

After training the model, it is essential to evaluate its performance on data it has not seen. Common metrics are accuracy, precision, recall, and F1 score. You can also use cross-validation to check how well the model generalizes.
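The metrics named above are simple to compute by hand, which helps when interpreting them. The following plain-Python sketch uses a small set of made-up labels and predictions. The helper names `accuracy` and `per_class_metrics` are hypothetical, and macro averaging (the unweighted mean across classes) is one common choice among several.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def per_class_metrics(y_true, y_pred, label):
    """Precision, recall, and F1 for one class, treated as the positive label."""
    tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
    fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
    fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

# Made-up labels and predictions for six test images.
y_true = ["happy", "happy", "sad", "angry", "sad", "happy"]
y_pred = ["happy", "sad", "sad", "angry", "happy", "happy"]

labels = sorted(set(y_true))
# Macro F1: average the per-class F1 scores with equal weight per class.
macro_f1 = sum(per_class_metrics(y_true, y_pred, c)[2] for c in labels) / len(labels)

print(f"accuracy: {accuracy(y_true, y_pred):.2f}")  # accuracy: 0.67
print(f"macro F1: {macro_f1:.2f}")                  # macro F1: 0.72
```

Per-class precision and recall matter in emotion recognition because datasets are often imbalanced. A model can reach high accuracy while rarely predicting the less frequent emotions.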

If the model’s performance is not satisfactory, you can fine-tune the parameters or try different feature combinations to achieve better results. PyFeat provides a flexible framework that allows easy experimentation and optimization.
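When comparing parameter settings or feature combinations, it helps to score every candidate on the same cross-validation splits. Here is a minimal plain-Python sketch of a k-fold splitter. The name `k_fold_indices` is hypothetical, and the splitter only produces index lists, so it works with any model or feature set.

```python
import random

def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_indices, test_indices) for k shuffled, near-equal folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Deal the shuffled indices into k folds, like dealing cards.
    folds = [indices[i::k] for i in range(k)]
    for i, test in enumerate(folds):
        # Every fold except the i-th one forms the training set.
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test

for train, test in k_fold_indices(n_samples=10, k=5):
    print(len(train), len(test))  # 8 training and 2 test indices per fold
```

For each candidate setting, you would train on `train`, score on `test`, and average the scores over the folds. Fixing the seed keeps the splits identical across candidates, so differences in score reflect the settings and not the split.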

Enhance Your Facial Expression Analysis with PyFeat

Facial expression analysis is an exciting area of research with numerous applications. With the help of PyFeat, you can delve into the world of facial expressions and gain valuable insights into human emotions. Whether you are a researcher, developer, or practitioner, PyFeat provides the necessary tools and algorithms to enhance your facial expression analysis workflow. Start exploring the power of PyFeat today and unlock the hidden secrets of facial expressions.
