Artificial intelligence (AI) has rapidly emerged as a disruptive technology, transforming the way businesses operate in industries like healthcare, finance, and retail. However, AI workloads are compute-intensive, and running them efficiently usually calls for specialized hardware accelerators. This article covers the main types of hardware AI accelerators and their advantages and disadvantages to help you make an informed decision.

Introduction to AI Accelerators

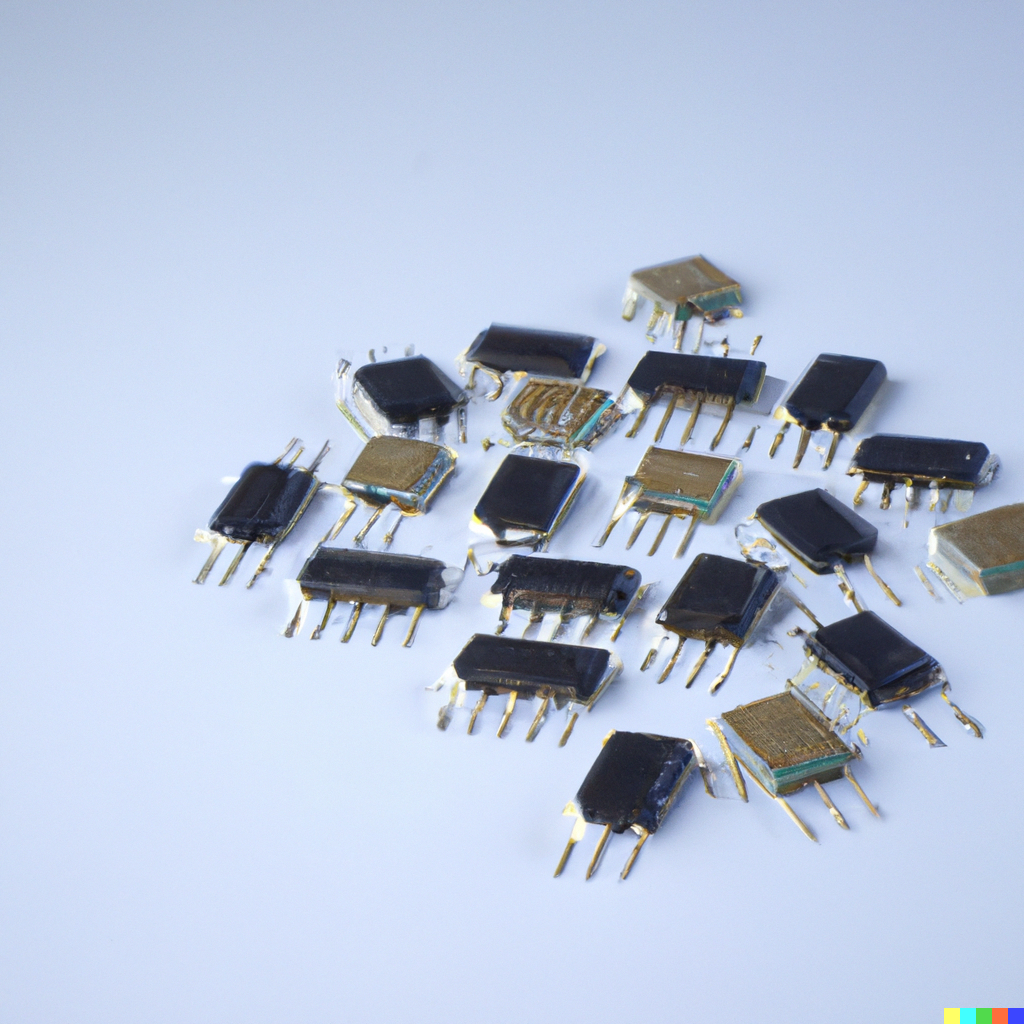

Hardware AI accelerators are specialized processors that speed up machine learning workloads, typically delivering higher throughput and better performance per watt than general-purpose CPUs on those workloads. The main types on the market are GPUs, FPGAs, and ASICs. TPUs, covered in their own section, are Google’s family of ASICs. Each type suits different workloads.

GPUs (Graphics Processing Units)

Graphics processing units (GPUs) are the most commonly used hardware accelerators for machine learning workloads. GPUs have been in use since the late 1990s, originally for graphics rendering, and today they are widely available and comparatively easy to program. Their architecture applies the same operation to thousands of data elements in parallel, which matches the matrix and vector arithmetic at the core of deep learning and also suits high-performance computing (HPC) workloads.
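To see why this kind of work parallelizes so well, consider matrix multiplication: every output row can be computed without reference to any other output row. The following is a minimal sketch in plain Python, not GPU code. The function names `matmul_row` and `parallel_matmul` are hypothetical, and the thread pool is there only to show that the tasks are independent. CPython threads will not give a real speedup here, whereas a GPU runs thousands of such independent tasks in hardware.

```python
from concurrent.futures import ThreadPoolExecutor


def matmul_row(args):
    """Compute one output row; it needs only one row of A and all of B."""
    row, b = args
    columns = list(zip(*b))  # transpose B so each column is one tuple
    return [sum(x * y for x, y in zip(row, col)) for col in columns]


def parallel_matmul(a, b, workers=4):
    """Multiply A by B, treating every output row as an independent task."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves input order, so rows come back in place.
        return list(pool.map(matmul_row, [(row, b) for row in a]))


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
result = parallel_matmul(a, b)
print(result)  # [[19, 22], [43, 50]]
```

Because no task waits on another, adding more execution units shortens the total run time almost linearly, which is the property GPU architectures are built to exploit.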

FPGAs (Field Programmable Gate Arrays)

Field-programmable gate arrays (FPGAs) are programmable logic devices whose circuitry can be configured after manufacturing to perform specific tasks, making them suitable for custom logic applications. Unlike GPUs, whose hardware architecture is fixed, FPGAs can be configured to implement a data path tailored to specific requirements. They offer low latency and high bandwidth, making them well suited to real-time processing applications like computer vision and speech recognition.

ASICs (Application-Specific Integrated Circuits)

Application-specific integrated circuits (ASICs) are custom-designed chips optimized for specific workloads. Unlike FPGAs, which can be reprogrammed, an ASIC is built for one purpose and cannot be reconfigured once manufactured. In exchange for that loss of flexibility, ASICs offer high performance and low power consumption, making them a strong fit for AI workloads that require high throughput.

TPUs (Tensor Processing Units)

Tensor Processing Units (TPUs) are Google’s custom-designed ASICs for deep learning workloads. TPUs were originally built to accelerate TensorFlow, Google’s open-source machine learning framework, and can also be used from other frameworks such as JAX and PyTorch. They are commonly applied to tasks like image and speech recognition. TPUs offer high throughput and low power consumption, making them a strong choice for large-scale AI workloads.

Comparison of Hardware AI Accelerators

When choosing a hardware AI accelerator, it is essential to weigh the workload requirements against power consumption and performance. GPUs are the most widely used AI accelerators and offer high performance and broad software support, though they typically draw more power than more specialized chips. FPGAs offer low latency and high bandwidth, making them well suited to real-time processing applications. ASICs offer high performance and low power consumption for the specific workloads they were designed for. TPUs, Google’s custom-designed ASICs, offer high throughput and low power consumption for large-scale AI workloads.
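The comparison above can be written down as a small lookup table, which makes the selection logic explicit. This is only an illustrative sketch: the trait labels, the `TRAITS` table, and the `shortlist` function are hypothetical names that summarize this article's descriptions, not a benchmark or an authoritative ranking.

```python
# Strengths attributed to each accelerator type in this article.
# The labels are illustrative assumptions, not measured data.
TRAITS = {
    "GPU": {"parallelism", "availability", "ease_of_programming"},
    "FPGA": {"low_latency", "high_bandwidth", "custom_logic"},
    "ASIC": {"high_throughput", "low_power"},
    "TPU": {"high_throughput", "low_power", "large_scale"},
}


def shortlist(required):
    """Return the accelerator types that match the most required traits."""
    required = set(required)
    scores = {name: len(required & traits) for name, traits in TRAITS.items()}
    best = max(scores.values())
    if best == 0:
        return []  # nothing in the table matches any requested trait
    return [name for name, score in scores.items() if score == best]


print(shortlist({"low_latency"}))                   # ['FPGA']
print(shortlist({"high_throughput", "low_power"}))  # ['ASIC', 'TPU']
print(shortlist({"large_scale", "low_power"}))      # ['TPU']
```

A real selection would also account for cost, available tooling, and team expertise, which a simple trait count like this does not capture.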

Advantages and Disadvantages of Hardware AI Accelerators

Hardware AI accelerators offer several advantages over traditional CPUs for machine learning workloads, including higher throughput, lower power consumption for the same amount of work, and lower latency. However, they also have disadvantages, including higher costs, limited scalability, and the need for specialized skills to program them.
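The power advantage is easiest to see as energy per unit of work rather than absolute power draw: an accelerator may draw more watts than a CPU yet finish each inference using far less energy. The sketch below works through that arithmetic. The function name `energy_per_inference_joules` is hypothetical, and the wattage and throughput figures are made-up placeholders chosen for round numbers, not measurements of any real device.

```python
def energy_per_inference_joules(power_watts, inferences_per_second):
    """Energy per inference: watts / (inferences per second) = joules each."""
    if inferences_per_second <= 0:
        raise ValueError("throughput must be positive")
    return power_watts / inferences_per_second


# Placeholder figures for illustration only (assumptions, not benchmarks).
cpu_energy = energy_per_inference_joules(power_watts=100.0,
                                         inferences_per_second=50.0)
accel_energy = energy_per_inference_joules(power_watts=250.0,
                                           inferences_per_second=1000.0)

print(cpu_energy)                 # 2.0 joules per inference
print(accel_energy)               # 0.25 joules per inference
print(cpu_energy / accel_energy)  # 8.0
```

With these placeholder numbers the accelerator draws 2.5 times the power but uses one eighth of the energy per inference, which is the sense in which accelerators are described as power-efficient.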

Conclusion

Hardware AI accelerators are essential for running AI workloads and offer several advantages over traditional CPUs. Choosing the right accelerator depends on the workload requirements, power consumption, and performance. GPUs are the most widely used AI accelerators and offer high