What is cosine similarity and how is it used in machine learning?

In the field of machine learning, cosine similarity is a widely used similarity metric that measures the cosine of the angle between two non-zero vectors. It is particularly useful in applications such as information retrieval, document clustering, and classification. In this article, we will discuss what cosine similarity is and how it is used in machine learning.

What is similarity?

Before we dive into cosine similarity, let’s first define what we mean by similarity. In machine learning, similarity refers to the degree to which two data points are alike. For instance, in a recommendation system, we might want to recommend a movie to a user that is similar to the movies the user has previously watched and enjoyed. Similarly, in clustering, we might want to group together data points that are similar to each other.

Cosine similarity

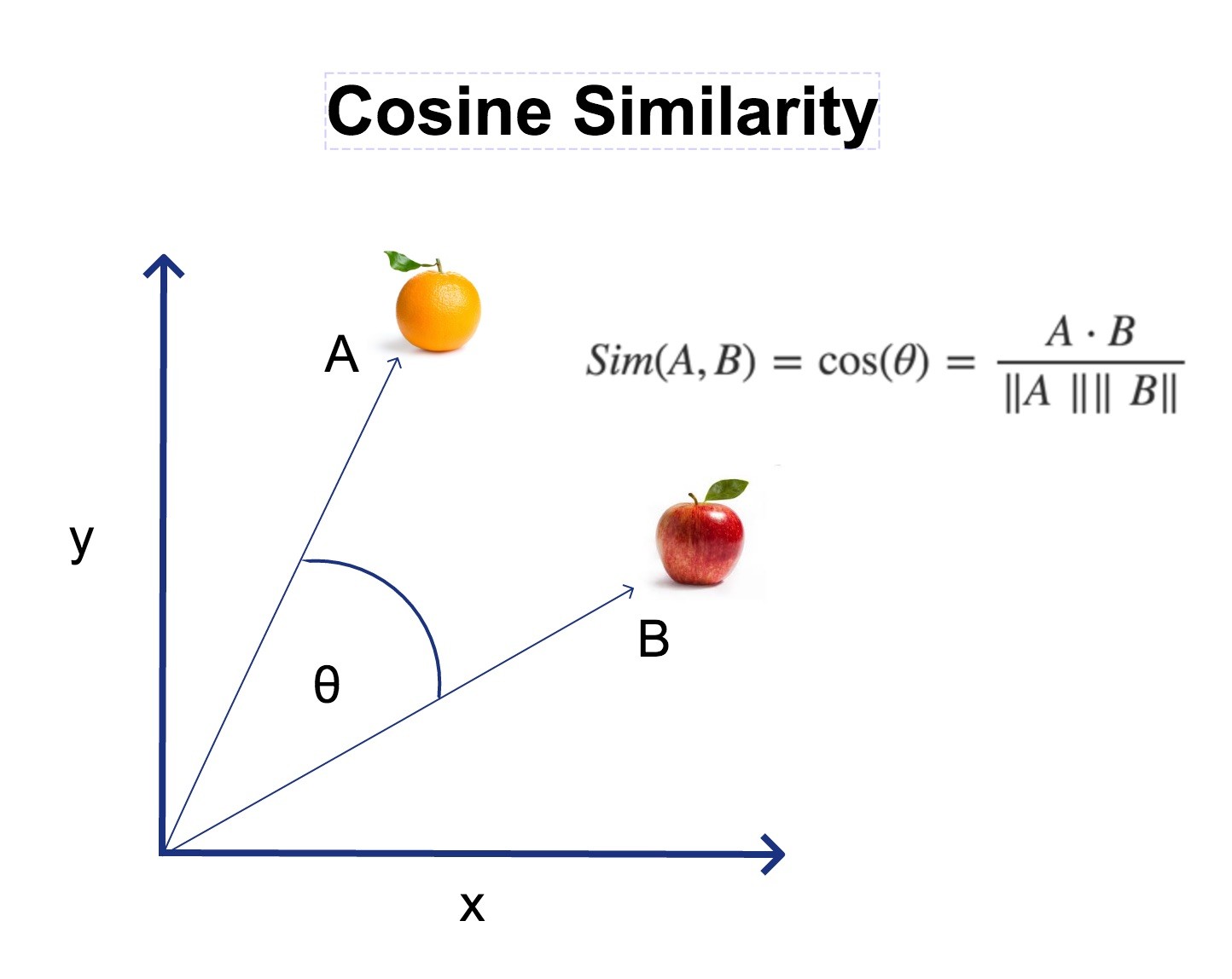

Cosine similarity measures the cosine of the angle between two non-zero vectors of an inner product space, so it compares the direction of the vectors rather than their magnitude. It is calculated as follows:

cosine_similarity = (A . B) / (||A|| * ||B||)

Where A and B are two non-zero vectors of the same dimension, “.” represents the dot product of two vectors, and “|| ||” represents the magnitude (Euclidean norm) of a vector. The dot product of two vectors A and B is the sum of the products of their corresponding components. The magnitude of a vector is the square root of the sum of the squares of its components.
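To make the formula concrete, here is a minimal sketch in plain Python. The function name `cosine_similarity` and the sample vectors are illustrative choices, not part of any particular library, and the function assumes both inputs are equal-length sequences of numbers.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    # Dot product: sum of the products of corresponding components.
    dot = sum(x * y for x, y in zip(a, b))
    # Magnitude: square root of the sum of squared components.
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_a * norm_b)

a = [1.0, 2.0, 3.0]
b = [4.0, 5.0, 6.0]
# dot = 32, ||a|| = sqrt(14), ||b|| = sqrt(77), so the result is roughly 0.9746
print(cosine_similarity(a, b))
```

In practice, numerical libraries provide vectorized versions of the same calculation; the explicit loop here is only meant to mirror the formula term by term.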

Cosine similarity ranges from -1 to 1, where 1 indicates that the two vectors point in exactly the same direction (they need not be identical, since magnitude is ignored), 0 indicates that the two vectors are orthogonal, and -1 indicates that the two vectors point in opposite directions. The closer the cosine similarity is to 1, the more similar the two vectors are, and the closer it is to -1, the more dissimilar they are. When all vector components are non-negative, as with word counts, the value cannot be negative and therefore falls between 0 and 1.
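The three landmark values can be checked with a small sketch. The helper `cosine_similarity` and the example vectors are illustrative assumptions; results are rounded because floating-point arithmetic can leave tiny errors in the last digits.

```python
import math

def cosine_similarity(a, b):
    # Assumes equal-length, non-zero vectors.
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Same direction, different magnitude: [3, 6] is 3 * [1, 2].
same_direction = round(cosine_similarity([1, 2], [3, 6]), 6)
# Perpendicular vectors.
orthogonal = round(cosine_similarity([1, 0], [0, 1]), 6)
# Opposite direction: [-1, -2] is -1 * [1, 2].
opposite = round(cosine_similarity([1, 2], [-1, -2]), 6)

print(same_direction, orthogonal, opposite)  # 1.0 0.0 -1.0
```

Note that `[1, 2]` and `[3, 6]` are not the same vector, yet they score 1 because only their direction is compared.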

Applications of cosine similarity

Cosine similarity has a wide range of applications in machine learning, some of which are listed below:

- Information retrieval: Cosine similarity is used to determine the similarity between a query and a document in information retrieval systems such as search engines.

- Document clustering: Cosine similarity is used to cluster similar documents together in document clustering algorithms.

- Text classification: Cosine similarity is used to classify text documents by comparing each document's vector with vectors that represent a set of pre-defined categories and choosing the closest match.

- Recommender systems: Cosine similarity is used to recommend items to users based on their similarity to previously liked items.

- Image processing: Cosine similarity is used to compare images, typically by comparing feature vectors extracted from them.
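As an illustration of the information retrieval case, the sketch below ranks a few toy documents against a query. Representing text as raw word counts is an assumption made to keep the example short (real systems often use weighted schemes or learned embeddings), and the document names, texts, and the helper `term_counts` are hypothetical.

```python
import math
from collections import Counter

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # Convention chosen for this sketch: empty text matches nothing.
    return dot / (norm_a * norm_b)

def term_counts(text, vocabulary):
    """Turn a text into a vector of word counts over a fixed vocabulary."""
    counts = Counter(text.lower().split())
    return [counts[term] for term in vocabulary]

documents = {
    "doc_cats": "cats chase mice and cats sleep",
    "doc_dogs": "dogs chase cats",
    "doc_stocks": "stocks rise and markets rally",
}
query = "cats sleep"

# Shared vocabulary so every vector has the same dimensions.
all_texts = list(documents.values()) + [query]
vocabulary = sorted({word for text in all_texts for word in text.lower().split()})

query_vec = term_counts(query, vocabulary)
scores = {
    name: cosine_similarity(query_vec, term_counts(text, vocabulary))
    for name, text in documents.items()
}
ranking = sorted(scores, key=scores.get, reverse=True)
print(ranking)  # ['doc_cats', 'doc_dogs', 'doc_stocks']
```

The same pattern carries over to the other applications listed: replace the word-count vectors with item vectors for recommendations or feature vectors for images, and rank or group by the resulting scores.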

Advantages of cosine similarity

Cosine similarity has several advantages over other similarity metrics:

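- Scale-invariant: Cosine similarity is scale-invariant, meaning that multiplying either vector by a positive constant does not change the result. For example, a long document and a short document with the same proportions of words receive the same score.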

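- Efficient: Cosine similarity is computationally efficient, requiring only one dot product and two norms, which takes time linear in the number of dimensions. If the vectors are normalized to unit length in advance, it reduces to a single dot product, making it suitable for use in large-scale machine learning applications.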

- Robust to magnitude outliers: Because cosine similarity ignores vector length, a data point with an unusually large magnitude does not distort the comparison. It is still sensitive to outliers that change a vector's direction, such as a single extreme component.

- Works with high-dimensional data: Cosine similarity works well with high-dimensional data, making it suitable for use in applications such as image processing and text classification.
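The scale-invariance and efficiency points above can be illustrated with a short sketch. The helper names (`dot`, `norm`, `normalize`) and the example vectors are assumptions made for illustration; Euclidean distance is included only as a contrast, since it does change when a vector is scaled.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def norm(v):
    return math.sqrt(dot(v, v))

def cosine_similarity(a, b):
    return dot(a, b) / (norm(a) * norm(b))

def normalize(v):
    """Scale a non-zero vector to unit length."""
    length = norm(v)
    return [x / length for x in v]

def euclidean_distance(a, b):
    return norm([x - y for x, y in zip(a, b)])

a = [1.0, 2.0, 3.0]
b = [2.0, 0.0, 1.0]
scaled_a = [10 * x for x in a]  # Same direction as a, ten times longer.

original = cosine_similarity(a, b)
scaled = cosine_similarity(scaled_a, b)
# With unit-length vectors, cosine similarity is just the dot product.
unit_dot = dot(normalize(a), normalize(b))

print(math.isclose(original, scaled))    # True: scaling does not matter
print(math.isclose(original, unit_dot))  # True: pre-normalized shortcut
print(euclidean_distance(a, b) < euclidean_distance(scaled_a, b))  # True
```

Normalizing vectors once and storing them is a common design choice when the same vectors are compared many times, because each later comparison then costs only one dot product.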