Reinforcement Learning (RL) is a fascinating field of artificial intelligence that enables machines to learn and make decisions through interaction with their environment. PyTorch, a popular deep learning framework, has gained significant traction in the RL community due to its flexibility and ease of use. In this article, we walk through the fundamental concepts and practical steps involved in building reinforcement learning models with PyTorch.

Introduction to Reinforcement Learning

Reinforcement Learning is a type of machine learning where an agent learns to make sequential decisions by interacting with an environment. It’s based on the idea of reward maximization, where the agent takes actions to maximize a cumulative reward signal over time.

Understanding RL Basics

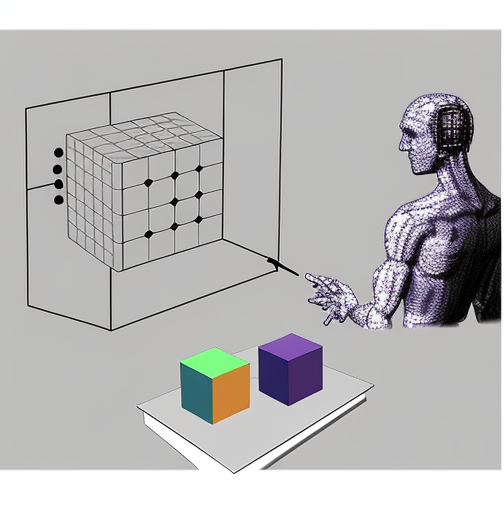

At the core of RL is the concept of an agent that interacts with an environment. The agent takes actions, and the environment responds with rewards and a new state. The agent’s objective is to learn a policy that maximizes the expected cumulative reward.

The Markov Decision Process

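RL problems are often formulated as Markov Decision Processes (MDPs), which consist of states, actions, rewards, transition probabilities, and a discount factor. The defining Markov property is that the next state and reward depend only on the current state and action, not on the full history of the interaction. MDPs provide a formal framework for modeling sequential decision-making problems.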
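The discount factor γ, typically chosen in [0, 1), makes a reward received k steps in the future count γ^k times as much as an immediate one. The small helper below is a minimal sketch (the function name `discounted_return` is our own) that computes this discounted return for the list of rewards from one episode, using the recursion G_t = r_t + γ·G_{t+1}.

```python
def discounted_return(rewards, gamma):
    """Return G_0 = r_0 + gamma * r_1 + gamma**2 * r_2 + ... for one episode."""
    g = 0.0
    # Walk backwards so each step is a single multiply-add: G_t = r_t + gamma * G_{t+1}
    for r in reversed(rewards):
        g = r + gamma * g
    return g


print(round(discounted_return([1.0, 1.0, 1.0], gamma=0.9), 4))  # 2.71
```

With γ close to 1 the agent weighs long-term consequences heavily; with γ = 0 it only cares about the immediate reward.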

Key Terminologies in RL

Before diving deeper into PyTorch, familiarize yourself with key RL terms such as state, action, reward, policy, and value function. These concepts come up constantly when building RL models.

Setting up your Environment

Before you can start building RL models in PyTorch, you need to set up your development environment. This includes installing the necessary libraries and configuring your Python environment.

Installing PyTorch

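PyTorch is a deep learning framework that provides tools for building and training neural networks efficiently. You can install it with pip or conda, depending on your preference and system; the installation page on the official PyTorch website lists the exact command for your operating system, package manager, and CUDA version.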

Selecting the Right Python Environment

Working inside an isolated Python environment, such as a conda environment or a virtual environment created with venv, keeps each project’s dependencies separate and your development setup clean.

Choosing an RL Environment Toolkit

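OpenAI Gym is a popular toolkit for developing and comparing RL algorithms, with a wide range of environments for training and testing your agents. Gym itself is no longer actively developed; its maintained successor, Gymnasium from the Farama Foundation, keeps a closely compatible interface and is the usual choice for new projects.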

Defining the Problem

In RL, defining the problem is a critical first step. You need to identify the task you want your agent to perform, design a suitable reward function, and represent the environment effectively.

Identifying the Task

The task could be anything from playing a game to controlling a robot. Clearly defining the task helps in setting the direction for your RL project.

Reward Design

Designing an appropriate reward function is one of the most challenging aspects of RL. It influences how your agent learns and behaves in the environment.

Environment Representation

You must represent the environment accurately, including states, actions, and possible transitions. This representation guides your agent’s decision-making process.

Creating the Agent

The agent is the heart of any RL system. It’s responsible for taking actions, learning from its experiences, and improving its decision-making capabilities.

The Q-Learning Algorithm

Q-Learning is a fundamental RL algorithm that forms the basis for many advanced techniques. It learns an action-value function Q(s, a): the expected cumulative discounted reward of taking action a in state s and following the optimal policy thereafter.
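The core of tabular Q-learning is a single update applied after every transition: Q(s, a) ← Q(s, a) + α [r + γ max_a′ Q(s′, a′) − Q(s, a)], where α is the learning rate. The sketch below implements that rule in plain Python with a dictionary-backed table; the function name and the default α and γ values are illustrative assumptions, not part of any library.

```python
from collections import defaultdict


def q_learning_update(Q, state, action, reward, next_state, done, n_actions,
                      alpha=0.1, gamma=0.99):
    """Apply one tabular Q-learning update in place and return the TD error."""
    # Bootstrapped target; a terminal transition has no future value
    best_next = 0.0 if done else max(Q[(next_state, a)] for a in range(n_actions))
    td_target = reward + gamma * best_next
    td_error = td_target - Q[(state, action)]
    Q[(state, action)] += alpha * td_error
    return td_error


Q = defaultdict(float)  # unseen (state, action) pairs start at 0.0
q_learning_update(Q, state=0, action=1, reward=1.0, next_state=1, done=False, n_actions=2)
print(Q[(0, 1)])  # 0.1
```

The max over next actions is what makes Q-learning off-policy: the target assumes greedy behavior from s′ onward, regardless of which action the agent actually takes next.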

Deep Q-Networks (DQNs)

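Deep Q-Networks are an extension of Q-Learning that use a neural network to approximate the Q-function, which makes Q-Learning practical when there are far too many states to store in a table. DQN famously learned to play many Atari 2600 games directly from screen pixels, relying on two stabilizing techniques: experience replay and a separate, periodically updated target network.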
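To make the idea concrete, here is a minimal PyTorch sketch of the DQN loss for a batch of transitions. It assumes a small MLP Q-network, a target network initialized as a copy, and a random stand-in batch in place of a real replay buffer; the Huber (smooth L1) loss is a common choice for DQN, and the function name `dqn_loss` is our own.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


def dqn_loss(q_net, target_net, batch, gamma=0.99):
    """Huber TD loss for a batch of (states, actions, rewards, next_states, dones)."""
    states, actions, rewards, next_states, dones = batch
    # Q(s, a) for the actions that were actually taken
    q_values = q_net(states).gather(1, actions.unsqueeze(1)).squeeze(1)
    with torch.no_grad():
        # max_a' Q_target(s', a'), zeroed out for terminal transitions
        next_q = target_net(next_states).max(dim=1).values
        targets = rewards + gamma * (1.0 - dones) * next_q
    return F.smooth_l1_loss(q_values, targets)


torch.manual_seed(0)
obs_dim, n_actions, batch_size = 4, 2, 32
q_net = nn.Sequential(nn.Linear(obs_dim, 64), nn.ReLU(), nn.Linear(64, n_actions))
target_net = nn.Sequential(nn.Linear(obs_dim, 64), nn.ReLU(), nn.Linear(64, n_actions))
target_net.load_state_dict(q_net.state_dict())  # the target starts as an exact copy

# Random stand-in batch; in practice these tensors are sampled from a replay buffer
batch = (
    torch.randn(batch_size, obs_dim),
    torch.randint(0, n_actions, (batch_size,)),
    torch.randn(batch_size),
    torch.randn(batch_size, obs_dim),
    torch.randint(0, 2, (batch_size,)).float(),
)
loss = dqn_loss(q_net, target_net, batch)
loss.backward()
print(loss.item())
```

Computing the targets under `torch.no_grad()` keeps gradients from flowing into the target network, which is only refreshed by copying weights from the online network.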

Policy Gradient Methods

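Policy Gradient methods take a different approach: they directly learn a policy function that maps states to actions (usually to a probability distribution over actions) and adjust its parameters by gradient ascent on the expected return. This class of algorithms includes REINFORCE and PPO.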
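REINFORCE, the simplest policy gradient method, increases the log-probability of each action taken in proportion to the return that followed it. The sketch below computes that loss for one episode of a discrete-action task in PyTorch; the network size, the stand-in episode data, and the return normalization (a common variance-reduction tweak, not part of the basic algorithm) are our own illustrative choices.

```python
import torch
import torch.nn as nn
from torch.distributions import Categorical


def reinforce_loss(policy_net, states, actions, rewards, gamma=0.99):
    """REINFORCE loss for one episode: -sum_t log pi(a_t | s_t) * G_t."""
    # Discounted return-to-go G_t = r_t + gamma * G_{t+1}, computed backwards
    returns, g = [], 0.0
    for r in reversed(rewards):
        g = r + gamma * g
        returns.append(g)
    returns = torch.tensor(returns[::-1], dtype=torch.float32)
    # Normalizing returns reduces variance; skip it when there is only one step
    if len(returns) > 1:
        returns = (returns - returns.mean()) / (returns.std() + 1e-8)
    log_probs = Categorical(logits=policy_net(states)).log_prob(actions)
    # Minimizing this negative objective performs gradient ascent on expected return
    return -(log_probs * returns).sum()


torch.manual_seed(0)
policy_net = nn.Sequential(nn.Linear(4, 32), nn.Tanh(), nn.Linear(32, 2))
states = torch.randn(5, 4)               # stand-in for one 5-step episode
actions = torch.tensor([0, 1, 1, 0, 1])
rewards = [1.0, 1.0, 1.0, 1.0, 1.0]
loss = reinforce_loss(policy_net, states, actions, rewards)
loss.backward()
```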

Building the Neural Network

To implement RL algorithms in PyTorch, you’ll need to design and train neural networks that can approximate value functions or policy functions.

Designing the Model Architecture

The architecture of your neural network plays a significant role in the success of your RL agent. It should be capable of handling the complexity of your chosen task.

Input and Output Layers

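Size the input layer to match the dimension of the environment’s observation (state) and the output layer to match its action space: for a DQN on a discrete action space, one output per action, each estimating that action’s Q-value; for a discrete policy network, one logit per action.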
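As a sketch of how these sizes translate into code, the module below is a plain MLP Q-network whose first layer takes the observation vector and whose last layer emits one value per discrete action. The class name, the hidden width of 128, and the two hidden layers are illustrative assumptions; the CartPole-v1 sizes in the usage line (4-dimensional observation, 2 actions) are just an example.

```python
import torch
import torch.nn as nn


class QNetwork(nn.Module):
    """MLP mapping an observation vector to one Q-value per discrete action."""

    def __init__(self, obs_dim: int, n_actions: int, hidden: int = 128):
        super().__init__()
        self.net = nn.Sequential(
            nn.Linear(obs_dim, hidden),    # input layer sized to the observation
            nn.ReLU(),
            nn.Linear(hidden, hidden),
            nn.ReLU(),
            nn.Linear(hidden, n_actions),  # output layer: one value per action
        )

    def forward(self, obs: torch.Tensor) -> torch.Tensor:
        return self.net(obs)


# CartPole-v1, for example, has a 4-dimensional observation and 2 discrete actions
q_net = QNetwork(obs_dim=4, n_actions=2)
print(q_net(torch.zeros(8, 4)).shape)  # torch.Size([8, 2])
```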

Training the Model

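Training an RL model involves optimization techniques such as stochastic gradient descent, or adaptive variants like Adam, to update the model’s parameters and improve its performance. Unlike in supervised learning, the training targets (such as TD targets or observed returns) are generated from the agent’s own experience, so they shift as the agent improves.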
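Whatever the algorithm, each parameter update in PyTorch follows the same pattern. The helper below is a minimal sketch (the name `train_step` and the clipping threshold are our own assumptions) that clears old gradients, backpropagates a loss, clips the gradient norm, and steps an Adam optimizer; fixed random targets stand in for the TD targets or returns an RL algorithm would supply.

```python
import torch
import torch.nn as nn


def train_step(model, optimizer, loss, max_grad_norm=10.0):
    """One gradient update: clear old gradients, backpropagate, clip, and step."""
    optimizer.zero_grad()
    loss.backward()
    # Clipping the gradient norm is a common safeguard against occasional huge updates
    nn.utils.clip_grad_norm_(model.parameters(), max_grad_norm)
    optimizer.step()
    return loss.item()


torch.manual_seed(0)
model = nn.Sequential(nn.Linear(4, 32), nn.ReLU(), nn.Linear(32, 2))
optimizer = torch.optim.Adam(model.parameters(), lr=1e-2)  # illustrative learning rate

# Fixed stand-in inputs and targets; in RL the targets would be TD targets or returns
x, y = torch.randn(64, 4), torch.randn(64, 2)
losses = [train_step(model, optimizer, nn.functional.mse_loss(model(x), y)) for _ in range(200)]
print(f"first loss {losses[0]:.3f}, last loss {losses[-1]:.3f}")
```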

Exploration vs. Exploitation

Balancing exploration and exploitation is a fundamental challenge in RL. Your agent needs to explore the environment to discover better policies while also exploiting its current knowledge to maximize rewards.

Balancing Act

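Finding the right balance between exploration and exploitation is a crucial aspect of designing RL algorithms: too little exploration can lock the agent into a suboptimal policy, while too much wastes experience on actions it already knows are poor.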

Epsilon-Greedy Strategy

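One common strategy is the epsilon-greedy approach, where the agent chooses a random action with probability epsilon and the best-known action with probability 1 - epsilon. Epsilon is usually started high and decayed over training, so the agent explores early and exploits more as its estimates improve.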
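A minimal epsilon-greedy selector plus a linear decay schedule looks like this in plain Python. The function names and the schedule values (1.0 down to 0.05 over 10,000 steps) are illustrative assumptions; in a PyTorch agent, `q_values` would come from the Q-network’s output for the current state.

```python
import random


def epsilon_greedy(q_values, epsilon, rng=random):
    """With probability epsilon pick a uniformly random action, otherwise the greedy one."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda a: q_values[a])


def linear_epsilon(step, start=1.0, end=0.05, decay_steps=10_000):
    """Anneal epsilon linearly from start to end over decay_steps, then hold it at end."""
    fraction = min(step / decay_steps, 1.0)
    return start + fraction * (end - start)


rng = random.Random(0)
print(epsilon_greedy([0.1, 0.7, 0.2], epsilon=0.0, rng=rng))  # 1 (always greedy)
print(linear_epsilon(5_000))  # halfway through the decay
```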

Continuous vs. Discrete Action Spaces

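Exploration strategies differ between discrete and continuous action spaces. Epsilon-greedy assumes a finite set of actions to pick from at random; with continuous actions, a common alternative is to add random noise (for example, Gaussian noise) to the action the policy outputs, or to sample actions from a stochastic policy.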
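For continuous actions, the noise-based alternative can be sketched in a few lines: perturb each action dimension with zero-mean Gaussian noise and clip the result back into the valid range. The function name, the noise scale, and the 2-D action bounded to [-1, 1] in the usage line are hypothetical, chosen only for illustration.

```python
import random


def noisy_action(action, sigma, low, high, rng=random):
    """Add zero-mean Gaussian noise to each action dimension, then clip to [low, high]."""
    return [min(max(a + rng.gauss(0.0, sigma), lo), hi)
            for a, lo, hi in zip(action, low, high)]


rng = random.Random(0)
# Hypothetical 2-D action (e.g. torques on two joints) bounded to [-1, 1]
print(noisy_action([0.9, -0.2], sigma=0.1, low=[-1.0, -1.0], high=[1.0, 1.0], rng=rng))
```

Like epsilon, `sigma` is often reduced over training so the agent relies more on its learned policy.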

Training and Evaluation

Once your RL agent is designed, it’s time to train and evaluate its performance. This iterative process involves tweaking hyperparameters and observing how your agent performs over multiple training sessions.

Training Loop

The training loop consists of repeatedly interacting with the environment, collecting experiences, and updating the agent’s policy or value function. This process continues until your agent demonstrates the desired level of performance.
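The loop below is a minimal, dependency-free sketch of this interact-then-update cycle. It uses tabular Q-learning rather than a neural network, on a hypothetical six-cell corridor environment (`Corridor`, invented for illustration) whose `step` method loosely mimics Gymnasium’s split between terminated and truncated episodes. It also records each episode’s return for progress tracking. The hyperparameter values are illustrative, not tuned.

```python
import random


class Corridor:
    """Hypothetical toy task: start in cell 0 and reach cell n - 1. Actions: 0 = left, 1 = right."""

    def __init__(self, n=6, max_steps=50):
        self.n, self.max_steps = n, max_steps

    def reset(self):
        self.pos, self.t = 0, 0
        return self.pos

    def step(self, action):
        self.pos = max(0, min(self.n - 1, self.pos + (1 if action == 1 else -1)))
        self.t += 1
        terminated = self.pos == self.n - 1    # reached the goal
        truncated = self.t >= self.max_steps   # ran out of time
        reward = 1.0 if terminated else -0.01  # small step cost favors short paths
        return self.pos, reward, terminated, truncated


env, rng = Corridor(), random.Random(0)
n_actions, alpha, gamma, epsilon = 2, 0.5, 0.95, 0.1
Q = [[0.0] * n_actions for _ in range(env.n)]
episode_returns = []

for episode in range(200):
    state, done, total = env.reset(), False, 0.0
    while not done:
        # 1. Interact: epsilon-greedy action, breaking ties between equal Q-values at random
        if rng.random() < epsilon:
            action = rng.randrange(n_actions)
        else:
            best = max(Q[state])
            action = rng.choice([a for a in range(n_actions) if Q[state][a] == best])
        next_state, reward, terminated, truncated = env.step(action)
        # 2. Learn: Q-learning update, with no bootstrapping from a terminal state
        target = reward + (0.0 if terminated else gamma * max(Q[next_state]))
        Q[state][action] += alpha * (target - Q[state][action])
        state, total, done = next_state, total + reward, terminated or truncated
    episode_returns.append(total)

# Greedy policy for every non-goal cell
greedy_policy = [max(range(n_actions), key=lambda a: Q[s][a]) for s in range(env.n - 1)]
print(greedy_policy)
```

A deep RL agent keeps the same skeleton; only the "Learn" step changes, for example to a sampled replay-buffer batch and a gradient step on a Q-network.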

Tracking Progress

It’s crucial to monitor your agent’s progress during training. You can use metrics like the average cumulative reward, episode length, or exploration rate to assess its learning curve.
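Raw per-episode returns are noisy, so learning curves are usually smoothed. A minimal sketch of a moving average over the most recent episodes follows; the class name and window size are our own choices, and in practice you would feed it each episode’s total reward and log the result (for example with TensorBoard).

```python
from collections import deque


class RunningAverage:
    """Mean of the most recent `window` episode returns, a common learning-curve metric."""

    def __init__(self, window=100):
        self.values = deque(maxlen=window)  # the oldest values drop out automatically

    def update(self, value):
        self.values.append(value)
        return sum(self.values) / len(self.values)


tracker = RunningAverage(window=3)
for episode_return in [10.0, 20.0, 30.0, 40.0]:
    avg = tracker.update(episode_return)
print(avg)  # 30.0 (mean of 20, 30, 40)
```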

Fine-Tuning Hyperparameters

Hyperparameters such as the learning rate, the discount factor, and the neural network architecture significantly affect your agent’s learning. Systematic experimentation is essential, ideally repeated across several random seeds, since RL results can vary considerably from run to run.

Handling Complex Environments

Many real-world RL problems involve high-dimensional states and continuous action spaces, making them more challenging. PyTorch provides building blocks for these settings, such as convolutional layers for image observations and GPU acceleration for larger networks.

Handling High-Dimensional States

Deep neural networks, convolutional networks in particular, excel at processing high-dimensional state information, making them suitable for tasks like image-based RL.
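For image observations, a common starting point is the convolutional layout popularized by DQN’s Atari experiments: a stack of four 84×84 grayscale frames passed through three convolutional layers and a fully connected head. The sketch below follows that layout; the frame preprocessing (grayscale, resizing, stacking) is assumed to happen elsewhere, and the action count of 6 is only an example.

```python
import torch
import torch.nn as nn


class AtariQNetwork(nn.Module):
    """Convolutional Q-network for stacks of 4 grayscale 84x84 frames."""

    def __init__(self, n_actions: int, in_frames: int = 4):
        super().__init__()
        self.features = nn.Sequential(
            nn.Conv2d(in_frames, 32, kernel_size=8, stride=4), nn.ReLU(),  # 84 -> 20
            nn.Conv2d(32, 64, kernel_size=4, stride=2), nn.ReLU(),         # 20 -> 9
            nn.Conv2d(64, 64, kernel_size=3, stride=1), nn.ReLU(),         # 9 -> 7
            nn.Flatten(),
        )
        self.head = nn.Sequential(nn.Linear(64 * 7 * 7, 512), nn.ReLU(), nn.Linear(512, n_actions))

    def forward(self, frames: torch.Tensor) -> torch.Tensor:
        # Scale uint8 pixels in [0, 255] to floats in [0, 1]
        return self.head(self.features(frames.float() / 255.0))


net = AtariQNetwork(n_actions=6)
frames = torch.randint(0, 256, (2, 4, 84, 84), dtype=torch.uint8)
print(net(frames).shape)  # torch.Size([2, 6])
```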

Continuous Action Spaces

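Continuous action spaces rule out the plain DQN approach, because picking the greedy action would require maximizing Q over infinitely many actions. Actor-critic methods, such as the DDPG algorithm, avoid this by training an actor network that outputs an action directly, guided by a critic network that estimates Q(s, a).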
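A minimal PyTorch sketch of such an actor-critic pair for a deterministic policy follows. The actor squashes its output with tanh and rescales it to the action bound; the critic takes the state and action together and returns a scalar. Layer sizes are illustrative assumptions, and the Pendulum-v1 dimensions (3-D observation, 1-D torque in [-2, 2]) are used only as an example.

```python
import torch
import torch.nn as nn


class Actor(nn.Module):
    """Deterministic policy: state -> action in [-max_action, max_action]."""

    def __init__(self, obs_dim, act_dim, max_action=1.0, hidden=256):
        super().__init__()
        self.max_action = max_action
        self.net = nn.Sequential(
            nn.Linear(obs_dim, hidden), nn.ReLU(),
            nn.Linear(hidden, hidden), nn.ReLU(),
            nn.Linear(hidden, act_dim), nn.Tanh(),  # tanh bounds each output to [-1, 1]
        )

    def forward(self, obs):
        return self.max_action * self.net(obs)


class Critic(nn.Module):
    """Q(s, a): takes the state and a continuous action, returns a scalar value."""

    def __init__(self, obs_dim, act_dim, hidden=256):
        super().__init__()
        self.net = nn.Sequential(
            nn.Linear(obs_dim + act_dim, hidden), nn.ReLU(),
            nn.Linear(hidden, hidden), nn.ReLU(),
            nn.Linear(hidden, 1),
        )

    def forward(self, obs, action):
        return self.net(torch.cat([obs, action], dim=-1)).squeeze(-1)


# Pendulum-v1, for example, has a 3-D observation and a 1-D torque action in [-2, 2]
actor, critic = Actor(3, 1, max_action=2.0), Critic(3, 1)
obs = torch.randn(16, 3)
actions = actor(obs)
# The actor is trained to maximize the critic's estimate, i.e. minimize -Q(s, actor(s))
actor_loss = -critic(obs, actions).mean()
actor_loss.backward()
```

In a full DDPG implementation, only the actor’s optimizer would step on `actor_loss`; the critic is trained separately on TD targets.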

Implementing Custom Gym Environments

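Sometimes, you may need to create custom Gym environments to model specific real-world problems accurately. Gym (and Gymnasium) let you define one by implementing the standard reset and step methods; because PyTorch makes no assumptions about where training data comes from, such an environment plugs into a PyTorch training loop just like a built-in one.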
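Below is a dependency-free sketch of a custom environment that follows Gymnasium’s conventions: `reset(seed=...)` returns `(observation, info)` and `step(action)` returns `(observation, reward, terminated, truncated, info)`. The grid task itself (`GridTarget`) is hypothetical. With Gymnasium installed, you would subclass `gymnasium.Env` and declare `observation_space` and `action_space` as well; that is omitted here to keep the example self-contained.

```python
import random


class GridTarget:
    """Hypothetical grid task: move from a random start cell to the bottom-right goal."""

    MOVES = {0: (0, 1), 1: (0, -1), 2: (1, 0), 3: (-1, 0)}  # right, left, down, up

    def __init__(self, size=4, max_steps=100):
        self.size, self.max_steps = size, max_steps
        self.goal = (size - 1, size - 1)

    def reset(self, seed=None):
        self.rng = random.Random(seed)
        # Random start cell (never the goal), reproducible when a seed is given
        cells = [(r, c) for r in range(self.size) for c in range(self.size) if (r, c) != self.goal]
        self.agent, self.steps = self.rng.choice(cells), 0
        return self.agent, {}  # (observation, info)

    def step(self, action):
        dr, dc = self.MOVES[action]
        r = min(max(self.agent[0] + dr, 0), self.size - 1)  # walls clamp movement
        c = min(max(self.agent[1] + dc, 0), self.size - 1)
        self.agent, self.steps = (r, c), self.steps + 1
        terminated = self.agent == self.goal
        truncated = self.steps >= self.max_steps
        reward = 1.0 if terminated else 0.0
        return self.agent, reward, terminated, truncated, {}


# Random-action rollout, the same loop shape a trained agent would use
env = GridTarget()
obs, info = env.reset(seed=42)
rng = random.Random(0)
done, total = False, 0.0
while not done:
    obs, reward, terminated, truncated, info = env.step(rng.randrange(4))
    total += reward
    done = terminated or truncated
print(obs, total)
```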

Advanced Topics in RL

As you gain experience in RL, you can explore advanced algorithms and techniques to tackle more complex problems effectively.

Trust Region Policy Optimization (TRPO)

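TRPO is an advanced policy optimization method that ensures stable policy updates by limiting how far each update can move the policy, measured by the KL divergence between the old and new policies. The constraint makes learning reliable, but enforcing it requires second-order machinery (a conjugate gradient solve and a line search), which makes TRPO comparatively complex to implement.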
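For reference, the standard form of TRPO’s update is the constrained problem below, where \(\hat{A}(s, a)\) is an advantage estimate, \(\pi_{\theta_{\text{old}}}\) is the policy before the update, and \(\delta\) is a small trust-region size chosen by the practitioner. It is a summary for orientation, not a derivation.

$$
\max_{\theta}\; \mathbb{E}_{s, a \sim \pi_{\theta_{\text{old}}}}\!\left[\frac{\pi_\theta(a \mid s)}{\pi_{\theta_{\text{old}}}(a \mid s)}\,\hat{A}(s, a)\right]
\quad \text{subject to} \quad
\mathbb{E}_{s}\!\left[D_{\mathrm{KL}}\!\left(\pi_{\theta_{\text{old}}}(\cdot \mid s)\,\middle\|\,\pi_\theta(\cdot \mid s)\right)\right] \le \delta
$$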

Proximal Policy Optimization (PPO)

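PPO is another popular policy optimization method that keeps much of TRPO’s stability while using only first-order optimization: it clips the probability ratio between the new and old policies so that a single update cannot move the policy too far. Its simplicity has made it widely used in both research and practical applications.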
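The heart of PPO is its clipped surrogate loss. The sketch below computes it in PyTorch from the log-probabilities of the taken actions under the new and old policies and their advantage estimates; how those advantages are computed (for example with GAE) is left out, and `clip_eps=0.2` is a commonly used default rather than a requirement.

```python
import torch


def ppo_clip_loss(new_log_probs, old_log_probs, advantages, clip_eps=0.2):
    """Negative PPO clipped surrogate objective (minimize this)."""
    ratio = torch.exp(new_log_probs - old_log_probs)  # pi_new(a|s) / pi_old(a|s)
    unclipped = ratio * advantages
    clipped = torch.clamp(ratio, 1.0 - clip_eps, 1.0 + clip_eps) * advantages
    # The elementwise minimum removes any incentive to push the ratio outside [1-eps, 1+eps]
    return -torch.min(unclipped, clipped).mean()


old = torch.log(torch.tensor([0.5, 0.5]))
new = torch.log(torch.tensor([0.9, 0.5]))  # first action's probability jumped from 0.5 to 0.9
adv = torch.tensor([1.0, 1.0])
loss = ppo_clip_loss(new, old, adv)
print(loss.item())  # ratio 1.8 is clipped to 1.2, so the loss is about -(1.2 + 1.0) / 2 = -1.1
```

In a full implementation `new_log_probs` comes from the current policy network (so gradients flow through it), while `old_log_probs` is stored when the data was collected.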

Deep Deterministic Policy Gradient (DDPG)

DDPG is designed for continuous action spaces and employs an actor-critic architecture. It has been successful in various robotic control tasks.
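DDPG keeps slowly moving target copies of both the actor and the critic, updated by Polyak averaging rather than by periodic hard copies. A minimal PyTorch sketch of that soft update follows; the function name and `tau=0.005` are illustrative choices, and a single linear layer stands in for a real actor.

```python
import copy

import torch
import torch.nn as nn


@torch.no_grad()
def soft_update(target: nn.Module, source: nn.Module, tau: float = 0.005):
    """Polyak averaging: target <- tau * source + (1 - tau) * target, parameter by parameter."""
    for t_param, s_param in zip(target.parameters(), source.parameters()):
        t_param.mul_(1.0 - tau).add_(s_param, alpha=tau)


actor = nn.Linear(3, 1)
actor_target = copy.deepcopy(actor)  # target networks start as exact copies
with torch.no_grad():
    actor.weight.add_(1.0)           # pretend a gradient step changed the online network
soft_update(actor_target, actor, tau=0.005)
```

After this call, each target weight has moved only 0.5% of the way toward the online weight, which keeps the critic’s TD targets from shifting abruptly.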

Model Deployment

Once you’ve trained a successful RL agent, you’ll want to deploy it in real-world applications. This involves saving and loading trained models and integrating them into existing systems.

Saving and Loading Trained Models

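PyTorch provides torch.save and torch.load for persisting trained models, usually applied to a model’s state_dict() (and the optimizer’s as well, if you plan to continue training). This lets you reuse your agents for different tasks or resume training from where you left off.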
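A minimal checkpointing sketch follows: it saves the model and optimizer state dicts plus a training counter into one file, then rebuilds the same architecture and restores them. The file name, the `"episode"` key, and the network shape are hypothetical; the important point is that `load_state_dict` needs a model with the same architecture as the one that was saved.

```python
import os
import tempfile

import torch
import torch.nn as nn

model = nn.Sequential(nn.Linear(4, 64), nn.ReLU(), nn.Linear(64, 2))
optimizer = torch.optim.Adam(model.parameters(), lr=1e-3)

path = os.path.join(tempfile.mkdtemp(), "agent_checkpoint.pt")  # hypothetical file name
# Save state dicts (tensors and plain values) rather than pickling whole objects
torch.save({"model": model.state_dict(), "optimizer": optimizer.state_dict(), "episode": 500}, path)

# Later: rebuild the same architecture, then restore the saved state
restored = nn.Sequential(nn.Linear(4, 64), nn.ReLU(), nn.Linear(64, 2))
restored_opt = torch.optim.Adam(restored.parameters(), lr=1e-3)
checkpoint = torch.load(path, map_location="cpu")
restored.load_state_dict(checkpoint["model"])
restored_opt.load_state_dict(checkpoint["optimizer"])
restored.eval()  # inference mode for deployment (matters for dropout or batch norm layers)
```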

Integrating RL Agents into Real-World Applications

RL agents can be integrated into various applications, from autonomous robotics to recommendation systems, to optimize decision-making processes.

Scaling Up for Production

Scaling up RL models for production involves optimizing performance, ensuring stability, and managing resources efficiently. It’s essential for deploying RL in business-critical applications.

Challenges and Pitfalls

While RL offers tremendous potential, it also comes with challenges and potential pitfalls that you should be aware of.

Overfitting and Underfitting

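Overfitting occurs when an RL agent becomes tuned to the specific situations it saw during training, such as a single environment configuration or random seed, and fails to generalize to new ones. Underfitting occurs when the model lacks the capacity or training time to capture the task, so performance stays poor even in the training environment. Evaluating on held-out seeds or environment variations helps detect overfitting.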

Exploration Challenges

Finding effective exploration strategies, especially in complex environments, can be challenging. An inadequate exploration strategy can hinder learning.

Reward Shaping Issues

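Designing the reward function is often a trial-and-error process. Poorly designed rewards can lead to suboptimal policies, learning stagnation, or reward hacking, where the agent exploits a loophole in the reward instead of solving the intended task.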
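One well-studied way to add guidance without changing what the agent ultimately optimizes is potential-based reward shaping: add F = γΦ(s′) − Φ(s) to the environment reward, where Φ is any function of the state. Shaping of this form is known to preserve the optimal policy. The sketch below is illustrative; the potential function (negative distance to a goal cell in a hypothetical corridor) is our own example, and Φ is taken as 0 at terminal states.

```python
def shaped_reward(reward, state, next_state, done, potential, gamma=0.99):
    """Add the potential-based term F = gamma * phi(s') - phi(s) to the environment reward."""
    # Treat phi as 0 at terminal states so the shaping terms telescope over an episode
    next_phi = 0.0 if done else potential(next_state)
    return reward + gamma * next_phi - potential(state)


# Hypothetical potential for a corridor whose goal is cell 5: closer cells score higher
def potential(cell):
    return -abs(5 - cell)


print(shaped_reward(0.0, state=2, next_state=3, done=False, potential=potential, gamma=1.0))  # 1.0
```

Moving toward the goal earns a bonus and moving away earns an equal penalty, so the agent cannot accumulate shaping reward by going back and forth.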

Ethical Considerations

As with any AI technology, ethical considerations are essential in RL.

Bias and Fairness

RL agents can inherit biases present in training data, leading to unfair or discriminatory behavior. Ensuring fairness and addressing biases is critical.

Transparency in RL Models

Understanding how RL agents make decisions is vital for trust and accountability. Transparent models are easier to interpret and audit.

Responsible AI in RL

Developers must adhere to ethical guidelines and responsible AI practices when designing and deploying RL models, especially in applications with real-world consequences.

Future of Reinforcement Learning

Reinforcement Learning continues to evolve rapidly. Stay updated on recent advances and explore emerging applications.

Recent Advances

Stay informed about the latest RL research and breakthroughs, as they may offer more efficient algorithms or new solutions to complex problems.

OpenAI’s GPT-4 and Reinforcement Learning

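RL already plays a role in today’s language models: GPT-4, for example, was fine-tuned with reinforcement learning from human feedback (RLHF). Deeper integration of RL with powerful language models holds the promise of even more versatile and intelligent AI systems.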

Emerging Applications

RL is finding applications in various domains, from healthcare and finance to autonomous vehicles and natural language processing. Explore how RL can solve problems in your area of interest.

Conclusion

In this comprehensive guide, we’ve explored the exciting world of Reinforcement Learning and how to build RL models using PyTorch. You’ve learned the fundamental concepts, practical steps, and advanced techniques required to embark on your RL journey. Remember that RL is both a challenging and rewarding field, and continuous learning and experimentation are key to success.

Now that you’re equipped with this knowledge, you can start your adventure in the world of RL, create intelligent agents, and tackle complex real-world problems.