Introduction: AI’s Role in Neuroscience

Machine learning has brought remarkable predictive power to neuroscience. Yet a pressing question remains: can AI truly help us understand the brain, or does it merely predict outcomes without conveying real insight? As AI models become more sophisticated, their ability to forecast neural activity and other biological phenomena continues to grow. The gap between prediction and understanding, however, is widening, and that poses a challenge for scientists who seek deep explanations, not just accurate forecasts.

Prediction Versus Understanding in Science

Throughout scientific history, prediction and understanding have been intertwined. Hodgkin and Huxley, for example, not only predicted the shape of the action potential but also explained the biophysical mechanism behind it: voltage-dependent sodium and potassium conductances in the neuronal membrane. Their work distilled a complex phenomenon into a compact, interpretable model. This union of prediction and explanation has long been prized in neuroscience.

However, newer developments such as AlphaFold represent a different paradigm. Models of this kind can predict protein structures with extraordinary accuracy but often fail to provide an explanatory framework that humans can internalize. The process of understanding, the ‘aha!’ moment, is lost. Machine learning in neuroscience is heading down a similar path: models such as transformers analyze vast neural datasets and make precise predictions, but leave the underlying principles obscure.

The Limits of Predictive AI Models

Modern AI approaches, especially foundation models, are now used to analyze large-scale brain data, from spike trains to calcium imaging. Companies and research labs are racing to automate scientific discovery, hoping to uncover commercially viable breakthroughs. Yet these tools might offer answers without insight: predictions that never reduce the complexity of neural systems to generalizable principles.

This lack of explanatory power has led to the rise of mechanistic interpretability, a subfield dedicated to opening these black-box models and extracting human-interpretable features. For instance, researchers have trained one model (a sparse autoencoder) on the internal activations of another (a transformer trained on neural data), hoping to decompose those activations into features that reveal the underlying logic. Ironically, we now need scientific models of our scientific models in order to understand them.

The Value of Compression and Generalization

A key component of understanding is compression: reducing complex data into simple, teachable theories. As a maxim often attributed to Einstein puts it, a theory should be as simple as possible, but not simpler. Compact models such as the Hodgkin-Huxley equations or ring attractor models are small enough to hold in the human mind, which lets us mentally simulate them and truly grasp them.
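To make "fits in the human mind" concrete, here is the Hodgkin-Huxley model in its standard textbook form. The notation is the conventional one and is not taken from this article: $V$ is membrane potential, $C_m$ membrane capacitance, $I$ injected current, $\bar{g}$ the maximal conductances, $E$ the reversal potentials, and $m$, $h$, $n$ the gating variables.

$$
C_m \frac{dV}{dt} = I - \bar{g}_{\mathrm{Na}}\, m^3 h\,(V - E_{\mathrm{Na}}) - \bar{g}_{\mathrm{K}}\, n^4\,(V - E_{\mathrm{K}}) - \bar{g}_{L}\,(V - E_{L})
$$

$$
\frac{dx}{dt} = \alpha_x(V)\,(1 - x) - \beta_x(V)\,x, \qquad x \in \{m, h, n\}
$$

Four coupled equations and a handful of fitted rate functions describe the action potential, and each term has a physical reading (a sodium current, a potassium current, a leak).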

In contrast, the AI models used in neuroscience can store and process vast amounts of data without ever needing to compress it. Their internal logic may be accurate but remains beyond human comprehension. This raises a question: if AI delivers useful predictions, such as identifying drugs or therapies, does the lack of understanding matter?

While society primarily funds science for its practical benefits, many researchers and patrons throughout history have pursued knowledge for its intrinsic beauty. However, if predictions alone yield tangible results, broader society may not prioritize understanding.

Case Study: Predictive Power Versus Explanation

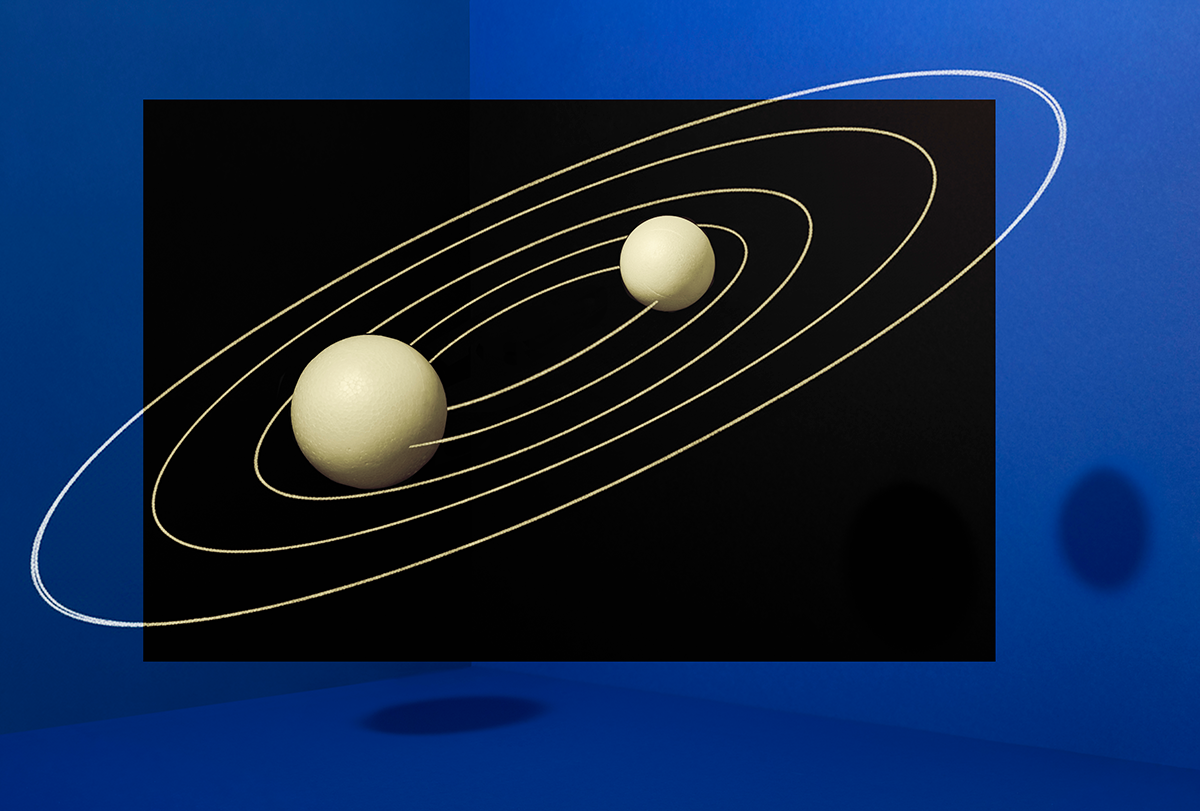

Consider a recent experiment in which researchers simulated millions of planetary orbits and trained a transformer model to predict their trajectories. The model excelled at forecasting positions but failed to deduce the universal law of gravitation. Instead, it relied on a patchwork of heuristics, and it could neither generalize across datasets nor supply the foundational principle, something essential for genuine scientific progress.

This limitation echoes historical astronomy, where geocentric models stacked epicycles to predict planetary movement with impressive accuracy, yet lacked the unifying simplicity of Newton’s laws. Likewise, in neuroscience, a transformer may predict neural activity beautifully but offer no insight into the computation occurring within circuits.
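The contrast between a compressed law and a patchwork predictor can be illustrated with a toy example. The sketch below is not the experiment described above; it is a minimal illustration that uses approximate textbook values for six planets and two hypothetical helper functions, `law_prediction` and `lookup_prediction`. It fits a two-parameter power law (Kepler's third law, in effect) and compares it with a nearest-neighbour lookup when both are asked to extrapolate to Neptune.

```python
import numpy as np

# Approximate textbook values: semi-major axis (AU) and orbital period (years).
planets = {
    "Mercury": (0.387, 0.241),
    "Venus": (0.723, 0.615),
    "Earth": (1.000, 1.000),
    "Mars": (1.524, 1.881),
    "Jupiter": (5.203, 11.86),
    "Saturn": (9.54, 29.46),
}
# Neptune is held out to test extrapolation beyond the training range.
neptune_axis, neptune_period = 30.07, 164.8

axes = np.array([a for a, _ in planets.values()])
periods = np.array([t for _, t in planets.values()])

# Compressed model: log T = exponent * log a + intercept (just two numbers).
exponent, intercept = np.polyfit(np.log(axes), np.log(periods), 1)


def law_prediction(axis):
    """Predict the period from the fitted power law."""
    return float(np.exp(intercept) * axis ** exponent)


def lookup_prediction(axis):
    """Predict the period by copying the nearest memorized planet."""
    nearest = np.argmin(np.abs(axes - axis))
    return float(periods[nearest])


print(f"fitted exponent: {exponent:.3f}")
print(f"power law, Neptune period: {law_prediction(neptune_axis):.1f} years")
print(f"lookup, Neptune period:    {lookup_prediction(neptune_axis):.1f} years")
```

The fitted exponent comes out close to 3/2, so the power law extrapolates to Neptune well, while the lookup simply returns Saturn's period. A large neural network is far more capable than a lookup table, so this is only an analogy for the difference between memorizing cases and recovering the rule that generates them.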

The Importance of Understanding in Neuroscience

Understanding, achieved through compression and generalization, allows for creative leaps across domains. For example, the discovery of oriented receptive fields in the visual cortex not only explained neural activity but also inspired the development of convolutional neural networks in computer vision. Similarly, drift-diffusion models bridged psychophysics and single-neuron analysis, providing frameworks that extend beyond their initial context.
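The drift-diffusion model is a good example of how little machinery such a framework needs. The following is a minimal sketch under assumed, illustrative parameters; the function name `simulate_ddm` and all default values are hypothetical and not taken from any particular study. Evidence starts at zero, drifts toward the correct bound at a constant rate, is perturbed by Gaussian noise, and a decision is made when either bound is crossed.

```python
import numpy as np


def simulate_ddm(drift, bound=1.0, noise=1.0, dt=0.001, max_t=5.0,
                 n_trials=2000, seed=0):
    """Simulate a two-bound drift-diffusion process with Euler steps.

    Returns (choice, rt): choice is +1 (upper bound), -1 (lower bound),
    or 0 (no decision before max_t); rt is the decision time in seconds,
    or NaN for undecided trials.
    """
    rng = np.random.default_rng(seed)
    evidence = np.zeros(n_trials)
    choice = np.zeros(n_trials, dtype=int)
    rt = np.full(n_trials, np.nan)
    active = np.ones(n_trials, dtype=bool)

    for step in range(1, int(max_t / dt) + 1):
        n_active = int(active.sum())
        if n_active == 0:
            break
        # Euler-Maruyama update: deterministic drift plus scaled noise.
        evidence[active] += (drift * dt
                             + noise * np.sqrt(dt) * rng.standard_normal(n_active))
        hit_upper = active & (evidence >= bound)
        hit_lower = active & (evidence <= -bound)
        choice[hit_upper] = 1
        choice[hit_lower] = -1
        rt[hit_upper | hit_lower] = step * dt
        active &= ~(hit_upper | hit_lower)
    return choice, rt


# Weak versus strong evidence (illustrative drift rates).
hard_choice, hard_rt = simulate_ddm(drift=0.5)
easy_choice, easy_rt = simulate_ddm(drift=2.0)

hard_accuracy = np.mean(hard_choice[hard_choice != 0] == 1)
easy_accuracy = np.mean(easy_choice[easy_choice != 0] == 1)
print(f"hard: accuracy {hard_accuracy:.2f}, mean RT {np.nanmean(hard_rt):.2f} s")
print(f"easy: accuracy {easy_accuracy:.2f}, mean RT {np.nanmean(easy_rt):.2f} s")
```

With a stronger drift the simulated decisions are both faster and more accurate. The same three quantities (drift, bound, noise) can be read as properties of a behaving subject or of an accumulating neural signal, which is what lets the model travel between psychophysics and single-neuron recordings.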

AI models that memorize data without uncovering underlying structure cannot facilitate such leaps. The act of distilling vast datasets into concise theories remains, for now, a fundamentally human endeavor.

Conclusion: The Ongoing Need for Human Insight

While machine learning in neuroscience continues to revolutionize predictive capabilities, the drive for understanding remains vital. Accurate predictions may suffice in some cases, but the ability to compress, explain, and generalize is essential for true scientific advancement. Even in an era dominated by large AI models, fostering human insight and interpretation in neuroscience may be our most important role.
