Inside Multimodal Neurons, Discovered By OpenAI In One Of Its Most Advanced Neural Networks

Artificial Intelligence is changing the way we perceive the world and how we interact with it. With advancements in AI, there is a growing interest in understanding how neural networks operate, how they learn, and how they make decisions. OpenAI, an AI research company, has contributed to this work by studying the inner workings of its own networks, which led to the discovery of Multimodal Neurons.

In this article, we’ll take a deep dive into Multimodal Neurons, their functions, how they work, and why they’re so important to the field of AI.

What Are Multimodal Neurons?

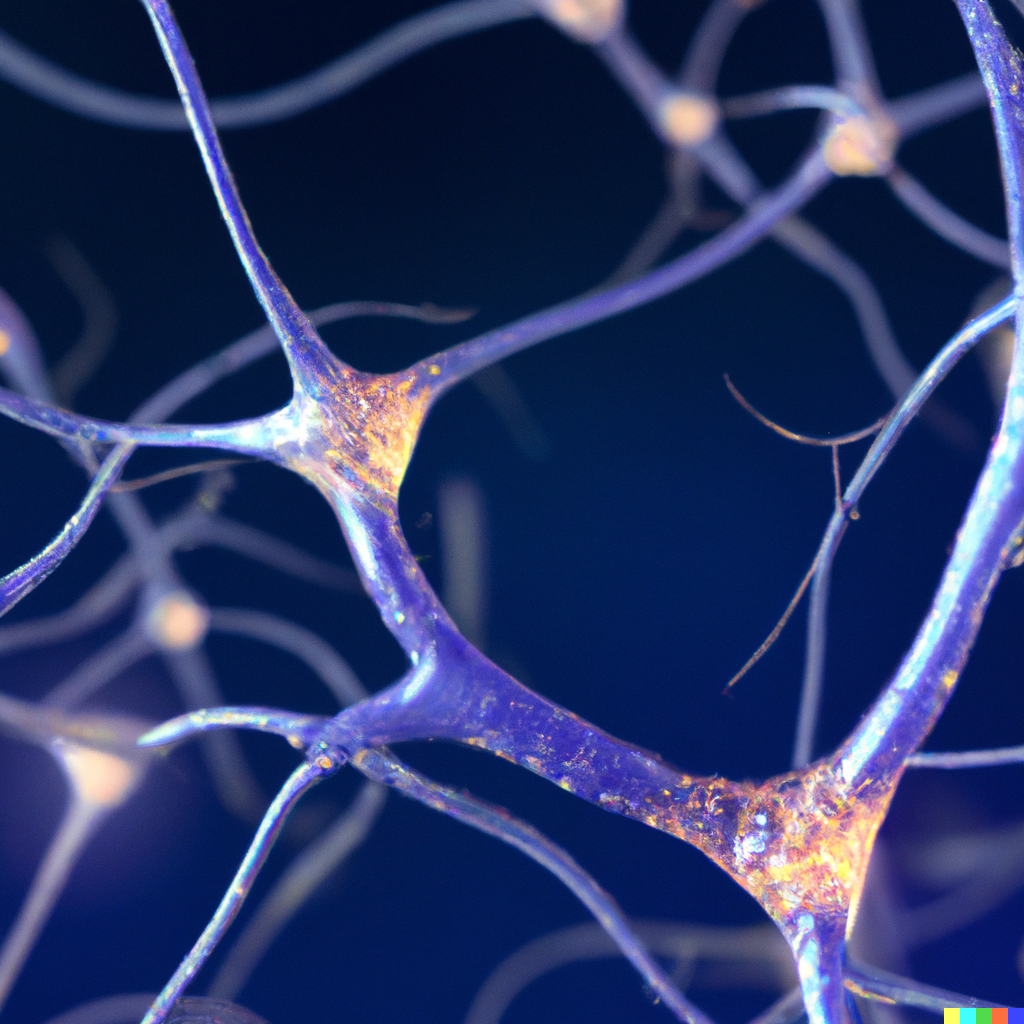

Multimodal Neurons are a type of neuron found in the brain that can process information from different senses, such as sight and sound, and combine them into a single representation. This ability to integrate information from different modalities is a critical feature of human intelligence and has been the subject of intense study in the field of AI.

Multimodal Neurons in artificial neural networks were reported by OpenAI researchers in 2021. While studying CLIP, a network trained on images paired with text, they found neurons that respond to the same concept whether it appears as a photograph, a drawing, or written text, so that a single neuron represents the concept across modalities.

How Do Multimodal Neurons Work?

Multimodal Neurons work by integrating information from different senses in a way that allows the brain to understand the world more accurately. For example, when we see a cat, we not only see its shape and color, but we also hear its meow and feel its fur. Multimodal Neurons can combine all this information into a single representation, allowing us to identify the cat more quickly and accurately.
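To make the idea of a single shared representation concrete, here is a minimal sketch in Python. It is a toy illustration, not how the brain or any OpenAI model is implemented: the three-dimensional vectors, the names `CAT_IMAGE`, `CAT_SOUND`, `CAR_IMAGE`, and the function `neuron_fires` are all hypothetical, and the threshold is chosen arbitrarily. The sketch assumes that inputs from different modalities have already been mapped into the same vector space, and shows one unit responding to the concept "cat" regardless of which modality the input came from.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Hypothetical embeddings in a shared 3-dimensional space (made-up numbers).
CAT_IMAGE = [0.9, 0.1, 0.0]   # what seeing a cat might map to
CAT_SOUND = [0.8, 0.2, 0.1]   # what hearing a meow might map to
CAR_IMAGE = [0.0, 0.1, 0.9]   # an unrelated concept

# Hypothetical weights of a unit tuned to the "cat" direction.
CAT_NEURON_WEIGHTS = [1.0, 0.0, 0.0]


def neuron_fires(embedding, weights=CAT_NEURON_WEIGHTS, threshold=0.7):
    """Return True when the input points in roughly the same direction as the weights."""
    return cosine(embedding, weights) > threshold


if __name__ == "__main__":
    for name, vec in [("cat image", CAT_IMAGE), ("cat sound", CAT_SOUND), ("car image", CAR_IMAGE)]:
        print(name, neuron_fires(vec))
```

With these made-up numbers, the unit fires for both the cat image and the cat sound but not for the car image, which is the behavior that makes a neuron "multimodal" in this simplified sense.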

The discovery of Multimodal Neurons in an artificial network is a significant result for the field of AI, as it suggests that artificial systems can form concept-level representations that resemble those observed in the human brain. It also has implications for the development of AI systems that can recognize and categorize information from different sources, such as images, video, and audio.

Why Are Multimodal Neurons Important?

Multimodal Neurons are important for several reasons. First, they may offer insight into how information from different modalities can be combined into a single representation, which can help us develop AI systems that process information more accurately.

Second, Multimodal Neurons can be used to improve existing AI systems. For example, image recognition systems can be trained to recognize objects not only by their shape and color but also by their sound. This can help improve the accuracy of the system and make it more robust to changes in the environment.
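One simple way to picture how a second modality can make recognition more robust is late fusion, where separate per-modality scores are averaged before a decision is made. The sketch below is an assumption for illustration only: it is not a method described by OpenAI, and the function `fuse_scores`, the weight `image_weight`, and the example scores are hypothetical.

```python
def fuse_scores(image_scores, audio_scores, image_weight=0.5):
    """Combine per-label scores from two modalities with a weighted average.

    Returns the best label and the fused scores. Only labels present in
    both inputs are considered.
    """
    labels = image_scores.keys() & audio_scores.keys()
    fused = {
        label: image_weight * image_scores[label]
        + (1.0 - image_weight) * audio_scores[label]
        for label in labels
    }
    best = max(fused, key=fused.get)
    return best, fused


if __name__ == "__main__":
    # Hypothetical scores: the image alone is ambiguous (for example, a dark photo),
    # while the audio clearly contains a meow.
    image_scores = {"cat": 0.45, "dog": 0.55}
    audio_scores = {"cat": 0.90, "dog": 0.10}
    best, fused = fuse_scores(image_scores, audio_scores)
    print(best, fused)
```

In this made-up case the image alone would favor "dog", but the fused score favors "cat", showing how an extra modality can correct an ambiguous one. Real systems typically learn how to weight and combine modalities rather than using a fixed average.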

Finally, Multimodal Neurons have implications for the development of AI systems that can understand language. Language is a complex modality that involves both sound and meaning. Multimodal Neurons can help us understand how the brain processes language and how we can develop AI systems that can understand language more accurately.

How Can Multimodal Neurons Be Used?

One promising application of Multimodal Neurons is in the development of AI systems that can understand natural language. By combining information from different modalities, such as sound and meaning, these systems could better capture the nuances of human language and respond more accurately to user requests.

Beyond language understanding, multimodal neurons have potential applications in fields such as robotics, healthcare, and entertainment.

In robotics, multimodal neurons could support the development of robots with more human-like intelligence. By combining multiple senses such as vision, touch, and sound, robots can better understand and interact with their environment. For example, a robot equipped with multimodal neurons could navigate a room by recognizing objects through visual cues and sensing their texture and hardness through touch.

In the healthcare industry, multimodal neurons could be used to create more sophisticated medical devices. For instance, a medical device that can analyze a patient’s voice, facial expression, and body language could help clinicians diagnose conditions such as Parkinson’s disease or depression.

Multimodal neurons could also be used in entertainment to create more immersive experiences for users. For example, video game developers could use multimodal neurons to create more realistic characters that can react to a player’s actions in a more lifelike manner.

Overall, the potential applications of multimodal neurons are vast, and their development could lead to significant advancements in various fields.